Code Analysis Tool for Solo Developers: No-Fluff Guide

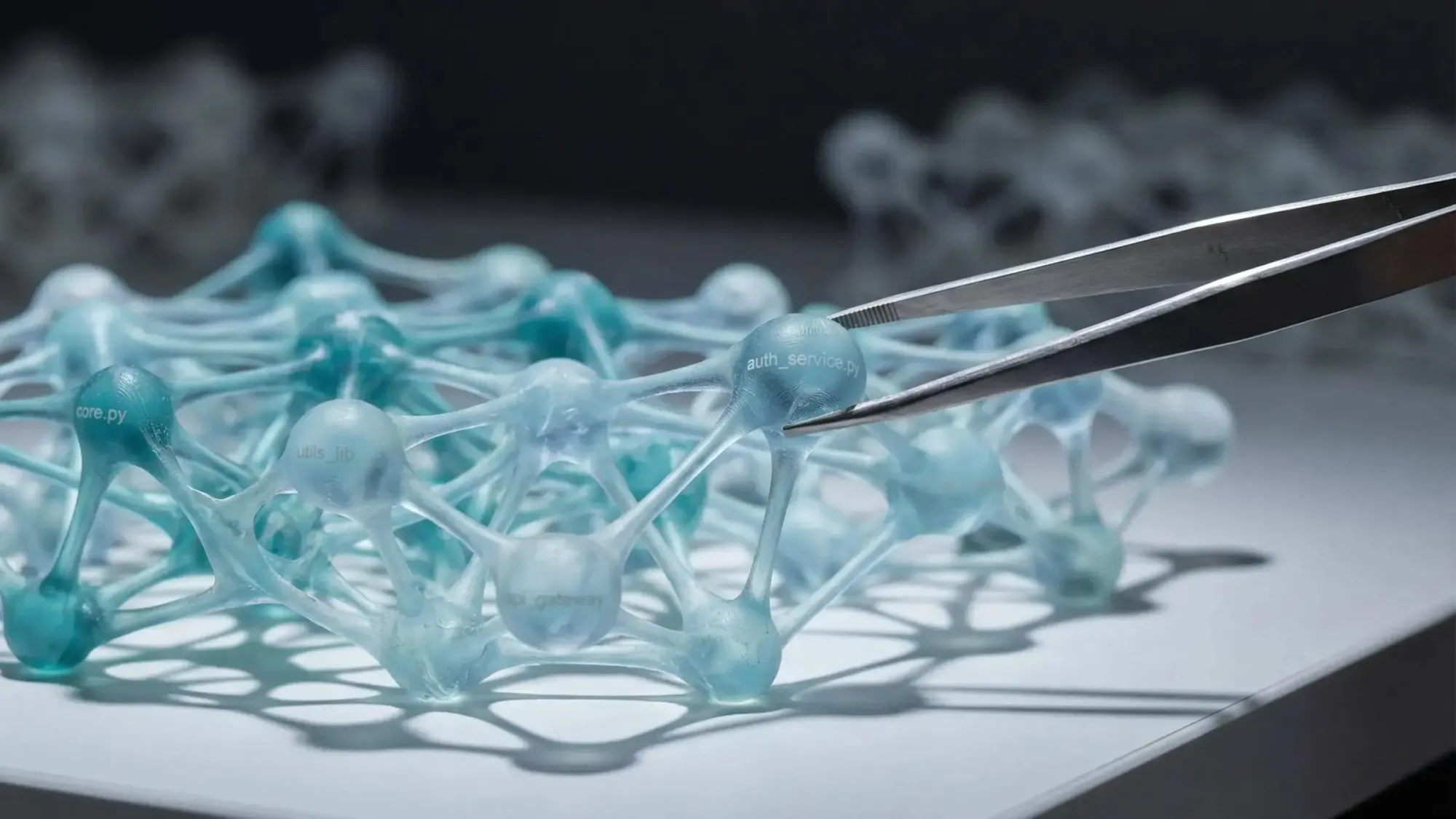

A code analysis tool for solo developers usually gets framed as linting with better branding. That's not the problem you actually have. The real problem is that your agent edits three files, misses the shared utility, and quietly breaks an endpoint you forgot existed.

What matters is structural truth before you change anything. You need to know what depends on a function, whether new code is reachable, and where duplicate logic is already hiding (usually in the weird helper file).

A few things worth checking right away:

- Can it trace callers, endpoints, and cron jobs before you touch shared code?

- Can your agent query repo structure directly instead of burning tokens reading files blind?

- Can it show dead exports and duplicate logic without flooding you with style noise?

That saves you from fast, stupid mistakes.

Why Solo Developers Need a Different Kind of Code Analysis Tool

You ask Claude Code or Cursor to refactor a utility. It changes three files, everything looks tidy, and two hours later you realize five endpoints and a cron job depended on the old behavior. That’s the kind of bug solo developers keep paying for.

When you're building alone, you’re not just writing code. You’re holding product logic, architecture, release risk, and cleanup in your head at the same time. The problem isn’t usually syntax. It’s hidden structure.

Traditional code analysis often misses that reality:

- lots of style noise

- very little help with dependency impact

- weak support for duplicate logic detection

- feedback that arrives after the risky change already happened

That’s backwards.

A useful code analysis tool for solo developers should give you structural truth before you code, refactor, delete, or merge. The job is simple:

- find existing logic before you rewrite it

- see what breaks before touching shared code

- detect dead code before a cleanup sprint turns into a regression

- verify new code is actually connected to production paths

If you use AI agents every day, this matters even more. Fast code generation without codebase awareness just means you can create mistakes faster.

What a Code Analysis Tool for Solo Developers Should Actually Do

A lot of tools get thrown into the same bucket. They shouldn’t be.

For this audience, a code analysis tool for solo developers isn’t just something that points out bad style or suspicious lines. It’s something that helps you understand how the system fits together so your agent stops guessing.

Here’s the split that actually matters:

- Linters and formatters catch style, syntax, and consistency issues

- Static security tools look for known bug and vulnerability patterns

- AI review tools comment on diffs or changed files

- Structural codebase intelligence tools map relationships across the repo

That last category is the one most solo devs are missing.

The high-value jobs are usually structural:

- codebase mapping

- function search

- dependency tracing

- blast radius analysis

- dead code detection

- reachability checking

That changes your mental model. You’re not only asking whether code is clean. You’re asking whether it’s connected, reused, safe to change, and aligned with the rest of the app.

Good analysis reduces guesswork. Bad analysis adds alerts.

That’s the whole game.

The Main Failure Mode: AI Agents Work File by File, Not System by System

Most AI coding tools still operate one file at a time. They infer architecture from fragments. Sometimes they infer well. Sometimes they sound confident while being half blind.

That leads to familiar failure modes:

- duplicate utilities because the agent didn’t know similar logic already existed

- breaking changes because downstream callers were invisible

- orphaned functions or endpoints that never get wired into entry points

- planning docs that ignore the current architecture

- massive context waste from reading file after file just to answer a structural question

We’ve seen the same pattern over and over. A developer spends 40K tokens on blind exploration when the real need was a small structural summary or a direct dependency query.

You don’t renovate a building by guessing which walls are load-bearing. Codebases aren’t different just because they compile.

Most code review tools catch problems after code exists. For solo developers moving fast, that’s too late. The useful moment is before the refactor, before the deletion, before the agent invents a fourth helper that does what the second helper already did.

The Four Categories of Tools You Will Encounter

If you’re evaluating tools, sort them by job first. Not by marketing page.

1. Linters and formatters

These are fast, local, and worth having. They keep syntax and style tight. In Python, Ruff is a good example of the direction people want - one fast binary replacing a pile of slower tools.

Still, they won’t tell you:

- what depends on a function

- whether a change affects production paths

- whether a new feature is actually reachable

Useful. Not enough.

2. Static analysis and security scanners

These help with known issue classes, bug patterns, and security checks. They fit well in CI and they’re good when you need repeatable gates.

Their limitation is usually scope. They tend to work at the line or rule level, not the architecture level. If your main problem is transitive impact or duplicate business logic, they won’t carry much weight there.

3. Local AI review tools

These are strong when you want privacy, offline use, or low external API cost. Many focus on changed code only through a local CLI or local model runtime.

That can be great for diff review. It’s weaker for repo-wide structure. If the tool mostly inspects the patch, it may miss the callers, entry points, and module relationships that matter most.

4. Codebase intelligence tools for AI agents

This category exists for a different reason. The goal is architectural understanding.

If your real pain is hidden dependency impact, repeated logic, dead code, or agents making changes without seeing the whole system, this is the category to look at. Pharaoh is one example here. We parse supported repos into a graph and expose structural lookups over MCP so tools like Claude Code, Cursor, and Windsurf can query the codebase directly. More at pharaoh.so.

It’s not a replacement for linting or testing. It fills the missing layer.

What to Look for When Choosing a Tool

Most solo developers don’t need a giant scorecard. You need a short checklist that predicts whether the tool will actually save you time by the second afternoon.

Here’s the filter we’d use:

- Setup time. If time to first value is long, it’ll die in your backlog.

- Language support. Obvious, but easy to miss when your repo is mixed.

- Integration with your actual workflow. Claude Code, Cursor, Windsurf, Copilot, GitHub. If it sits outside the flow, you won’t use it consistently.

- Architectural depth. Can it answer dependency and reachability questions, or is it just dressed-up text search?

- Deterministic results. Structural questions need repeatable answers. LLM guesses are fine for drafting, not for impact analysis.

- Cost profile. Are you paying per structural query, or mostly once at indexing time?

- Cognitive load. Another dashboard is often a tax, not a tool.

The output matters too. A useful tool should return something actionable for the next step, like:

- affected callers

- impacted endpoints

- duplicate clusters across modules

- unreachable exports

- dependency chain for a target function

If the output is vague commentary, you’re still doing the hard part yourself.

The Minimum Stack That Actually Works for a Solo Developer

One tool won’t cover everything, and pretending otherwise wastes time.

The lightweight stack that tends to work looks like this:

- a linter/formatter for style and syntax

- a static or security scanner for known issue classes

- an AI coding agent for implementation speed

- a codebase intelligence layer for structural questions

That’s not heavy process. That’s just covering different failure modes with the right tool.

For linting, testing, and general code quality discipline, the [open source AI Code Quality Framework](https://github.com/0xUXDesign/ai-codebase-boilerplate) is a useful reference point. It helps on the quality side. It doesn’t solve architectural visibility by itself.

That missing layer is the real issue for a lot of solo teams. Not another generator. Better context for the generator you already use.

Where Most Tools Fall Short for Solo Builders

This is where people buy the wrong thing.

Local AI review tools are strong on privacy and cost control. They’re often good at reviewing the diff in front of you. They’re usually weaker at repo-wide dependency understanding.

Traditional static analysis is good for known patterns. It’s weak at transitive dependency tracing, duplicate business logic, and production reachability.

Search tools are good at finding text. That’s not the same as answering:

- what breaks if this changes

- what’s dead

- what’s actually wired into production
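To make the gap between text search and structural lookup concrete, here is a minimal sketch using Python's standard `ast` module. The sample source and the helper names `text_hits` and `call_sites` are made up for illustration, and the sketch only recognizes plain calls like `name(...)`, not method calls or dynamic dispatch.

```python
import ast

# Hypothetical module: format_money is defined once, mentioned in a
# comment once, and actually called once.
SOURCE = '''
def format_money(amount):
    return f"${amount:.2f}"

def invoice_total(items):
    # TODO: stop using format_money here
    return sum(items)

def receipt(amount):
    return "Total: " + format_money(amount)
'''


def text_hits(source, name):
    """Line numbers where the name appears anywhere, comments included."""
    return [i for i, line in enumerate(source.splitlines(), 1) if name in line]


def call_sites(source, name):
    """(enclosing function, line) pairs where name is really called."""
    sites = []
    for func in ast.walk(ast.parse(source)):
        if not isinstance(func, ast.FunctionDef):
            continue
        for node in ast.walk(func):
            if (
                isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id == name
            ):
                sites.append((func.name, node.lineno))
    return sites


hits = text_hits(SOURCE, "format_money")
sites = call_sites(SOURCE, "format_money")
print("text matches:", len(hits))
print("real callers:", [func for func, _ in sites])
```

Text search reports the definition, the comment, and the call as equal matches. The syntax-tree walk reports only `receipt` as a caller, which is the answer an impact decision needs.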

General AI assistants are great at writing code. They’re inconsistent at structural reasoning when they have to infer the system from partial reads. You can feel this in practice. The code sounds plausible. The repo says otherwise.

If your main risk is hidden architecture, choose a tool that models structure directly. Everything else is a partial fix.

The Structural Questions That Matter More Than Another Lint Warning

The useful questions are not abstract. They come up in normal work, often right before you make a mistake.

Before writing code:

- does this function already exist somewhere?

- are we solving the same problem twice?

Before refactoring:

- what depends on this utility or module?

- how far does the blast radius go?

After implementing:

- is the new function reachable from a real entry point?

- did we add dead code by accident?

During cleanup:

- which exported functions are unused?

- what duplicate logic should be consolidated?

During planning:

- what in the spec is missing from the code?

- where does the current architecture already support this feature?

These questions buy you calmer refactors, fewer regressions, and less duplicate work. They also make AI-generated changes easier to trust because you’re validating structure, not just reading output.

Confidence is not understanding.

That line saves time.

How Pharaoh Fits This Category Without Replacing Everything Else

We built Pharaoh as infrastructure for AI coding tools, not as a coding assistant, PR bot, or test runner.

The simplest useful description is this: we parse your repo into a queryable knowledge graph, then expose deterministic structural lookups through MCP. For supported repos today, that means TypeScript and Python parsed with Tree-sitter, stored in Neo4j, and made available through our GitHub app and MCP workflow.

That matters because structural questions shouldn’t require new LLM spend every time. After the initial mapping, graph lookups don’t carry per-query model cost. You’re querying structure directly.

The core capabilities relevant here are straightforward:

- function search

- blast radius analysis

- dead code detection

- reachability checking

- dependency tracing

That’s the layer to add when your AI can write code but still can’t see the whole building. More at pharaoh.so.

A Real Workflow: Using Code Analysis Before, During, and After a Change

Here’s a practical way to use this without turning it into process theater.

Starting in an unfamiliar codebase

First, map modules and dependencies. Then inspect the target module before changing it. Before adding a new helper, search for existing functions that already solve the same problem.

This is where duplicate logic starts. Not from laziness. From missing visibility.
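As a rough illustration of searching for existing logic before adding a helper, the sketch below fingerprints function bodies with Python's `ast` module and groups identical ones. The file names and functions are hypothetical, and the approach only catches structurally identical bodies with the same parameter names. Detecting near-duplicates takes more than this.

```python
import ast
import hashlib
from collections import defaultdict


def body_fingerprint(func: ast.FunctionDef) -> str:
    """Hash a function's arguments and body, ignoring its name and docstring."""
    body = func.body
    # Drop a leading docstring so documentation differences don't matter.
    if (
        body
        and isinstance(body[0], ast.Expr)
        and isinstance(body[0].value, ast.Constant)
        and isinstance(body[0].value.value, str)
    ):
        body = body[1:]
    dumped = ast.dump(func.args) + "".join(ast.dump(stmt) for stmt in body)
    return hashlib.sha256(dumped.encode()).hexdigest()


def find_duplicates(sources: dict[str, str]) -> list[list[str]]:
    """Group functions across files whose fingerprints match."""
    groups = defaultdict(list)
    for filename, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.FunctionDef):
                groups[body_fingerprint(node)].append(f"{filename}:{node.name}")
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical repo contents, inlined so the sketch is self-contained.
SOURCES = {
    "billing/utils.py": "def cents(amount):\n    return int(round(amount * 100))\n",
    "helpers/misc.py": (
        "def to_cents(amount):\n"
        "    '''Convert dollars.'''\n"
        "    return int(round(amount * 100))\n"
    ),
    "reports/fmt.py": "def label(amount):\n    return f'${amount:.2f}'\n",
}

duplicates = find_duplicates(SOURCES)
print(duplicates)
```

Here `cents` and `to_cents` land in the same group even though their names and docstrings differ, which is the "weird helper file" case.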

Refactoring a shared function

Run blast radius analysis before editing. Identify direct callers, affected modules, and any production paths tied to that function.

A compact output should look something like this:

- Risk: Medium

- Direct callers: 7

- Affected endpoints: /api/billing, /api/invoices

- Impacted cron jobs: nightly_reconciliation

- Transitive modules: 4

That’s enough to change how you work.
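Under the hood, a blast radius query is a walk over reversed call edges. The sketch below assumes you already have a call graph as a plain dictionary. The graph contents and function names are hypothetical, and this is not a description of how any particular tool implements it.

```python
from collections import deque

# Hypothetical call graph: each key calls the functions in its list.
CALLS = {
    "api_billing": ["create_invoice"],
    "api_invoices": ["create_invoice", "format_money"],
    "nightly_reconciliation": ["sync_ledger"],
    "create_invoice": ["format_money"],
    "sync_ledger": ["format_money"],
    "format_money": [],
}


def reverse_graph(calls):
    """Invert caller -> callee edges into callee -> callers."""
    callers = {name: set() for name in calls}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)
    return callers


def blast_radius(calls, target):
    """Return (direct callers, all transitive callers) of target."""
    callers = reverse_graph(calls)
    direct = set(callers.get(target, set()))
    seen = set()
    queue = deque(direct)
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        # Walk upward: whoever calls this caller is also affected.
        queue.extend(callers.get(node, set()) - seen)
    return direct, seen


direct, transitive = blast_radius(CALLS, "format_money")
print("Direct callers:", sorted(direct))
print("All affected:", sorted(transitive))
```

Changing `format_money` has three direct callers, but the walk also surfaces `api_billing` and `nightly_reconciliation`, which never mention it by name. Those are the ones a file-by-file read tends to miss.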

After implementing a feature

Run reachability checks. Verify exports are connected to endpoints, cron jobs, or other real entry points. Then use dead code detection to catch anything left hanging.
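Reachability is the same idea run forward: start from real entry points and see what they can reach. A minimal sketch, assuming a hypothetical call graph and a hand-listed set of entry points (in a real repo these would be discovered from routes, schedulers, and CLI commands):

```python
# Hypothetical call graph: each key calls the functions in its list.
CALLS = {
    "api_billing": ["create_invoice"],
    "nightly_reconciliation": ["sync_ledger"],
    "create_invoice": ["format_money"],
    "sync_ledger": [],
    "format_money": [],
    "apply_discount": ["format_money"],  # new code, never wired in
    "legacy_export": [],                 # leftover from an old feature
}

# Assumed entry points: one endpoint handler and one cron job.
ENTRY_POINTS = {"api_billing", "nightly_reconciliation"}


def reachable_from(calls, entry_points):
    """Every function reachable by following calls from the entry points."""
    seen = set()
    stack = list(entry_points)
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        stack.extend(calls.get(node, []))
    return seen


def unreachable(calls, entry_points):
    """Functions that no entry point can reach: dead code candidates."""
    return set(calls) - reachable_from(calls, entry_points)


dead = unreachable(CALLS, ENTRY_POINTS)
print(sorted(dead))
```

Note that `apply_discount` calls live code but is itself unreachable. Treat the result as candidates to review, since a static graph will not see reflection or framework-level wiring.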

During a cleanup sprint

Look for duplicate logic across modules and unused exported functions. Reduce surface area safely. Small codebases get messy quietly.

Local Tools Versus Graph-Based Analysis: Which One Should You Use?

Use local tools when you mainly want private offline diff review, your repo is still small enough to hold in your head, and your main concern is changed lines rather than system topology.

Graph-based analysis starts paying for itself when:

- you use AI coding agents daily

- your codebase has enough modules that memory is no longer reliable

- you’re refactoring shared logic or planning architecture changes

- the expensive mistakes come from invisible dependencies

There’s a cost angle too. Local AI review can save cloud inference cost. Graph-based deterministic queries can save repeated LLM tokens on structural questions.

These approaches are often complementary. One helps review what changed. The other helps explain what the change means.

A Simple Evaluation Framework for Solo Developers

If you want a quick rubric, score any candidate tool from 1 to 5 on these:

- time to first value

- signal-to-noise ratio

- support for your language and workflow

- ability to answer architectural questions

- usefulness before changes, not just after

- recurring cost profile

- fit with your current AI agent stack

Watch for red flags:

- lots of warnings, little decision support

- vague AI commentary with no evidence

- no visibility into dependencies or production reachability

- another UI you have to babysit instead of something your agent can query

Judge the tool by outcomes. Fewer regressions. Less duplicated work. Lower token waste. That’s a better metric than how many checks it claims to run.
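If you want to keep the 1-to-5 rubric honest across several candidates, a few lines of Python are enough. This sketch assumes equal weighting across the seven criteria, which is a simplification. The example scores are invented.

```python
from statistics import mean

# The seven rubric criteria, in the order listed above.
CRITERIA = [
    "time to first value",
    "signal-to-noise ratio",
    "language and workflow support",
    "architectural questions",
    "useful before changes",
    "recurring cost profile",
    "fit with AI agent stack",
]


def rubric_score(scores: dict[str, int]) -> float:
    """Average the 1-5 scores, refusing incomplete or out-of-range input."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for criterion in CRITERIA:
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion!r} must be scored from 1 to 5")
    return round(mean(scores[c] for c in CRITERIA), 2)


# Invented scores for one hypothetical candidate tool.
candidate = dict(zip(CRITERIA, [5, 4, 4, 2, 2, 3, 5]))
overall = rubric_score(candidate)
weakest = min(CRITERIA, key=candidate.get)
print(overall, weakest)
```

The average matters less than the weakest criterion. A tool that scores 2 on architectural questions will not fix a structural blind spot, however quickly it installs.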

Common Mistakes When Adopting a Code Analysis Tool

A few mistakes show up again and again:

- Choosing based on hype instead of failure mode. Fix: start with the last few bugs or refactor misses and identify the pattern.

- Expecting one tool to cover linting, testing, security, architecture, and generation. Fix: build a small stack with clear jobs.

- Waiting until PR review to understand change impact. Fix: run dependency and blast radius checks before editing shared code.

- Treating code search as dependency analysis. Fix: use search for discovery, not for impact decisions.

- Assuming AI confidence means system understanding. Fix: ask for evidence, not tone.

- Skipping reachability checks after implementation. Fix: verify new code is wired into a real execution path.

The fastest team is usually the one that checks the right thing at the right moment.

Who This Matters Most For

This matters most if you’re:

- a solo founder shipping production code with Claude Code, Cursor, or Windsurf

- on a small team where one person often merges their own work

- inheriting an older codebase with weak docs

- planning monorepo work or cross-repo consolidation

You may need less of this if your repo is tiny, the change risk is low, and your main need is formatting or language-specific style enforcement. Not every project needs a graph on day one.

But once you feel the codebase slipping out of your head, you need more than memory.

What to Do This Week if You Want Less Guesswork

Keep this simple.

- Write down the last three bugs or refactor mistakes that cost you time.

- Classify them: style issue, security issue, or structural blind spot.

- Add one tool for syntax and quality discipline, and one for architecture visibility if that’s the bottleneck.

- Before your next refactor, answer these four questions explicitly:

    - does the function already exist

    - what depends on it

    - what becomes unreachable if it changes

    - what code can be deleted after

- If you use Claude Code or another MCP-compatible agent, consider adding a codebase graph so the agent stops exploring blindly. Pharaoh does this for supported repos and workflows through MCP at pharaoh.so.

The right code analysis tool for solo developers should reduce uncertainty, not add ceremony. The shift is from file-by-file guesswork to system-level awareness.

Audit your current workflow for one missing capability - duplicate detection, blast radius, reachability, or dependency tracing - and close that gap first. That’s usually where the calm comes back.