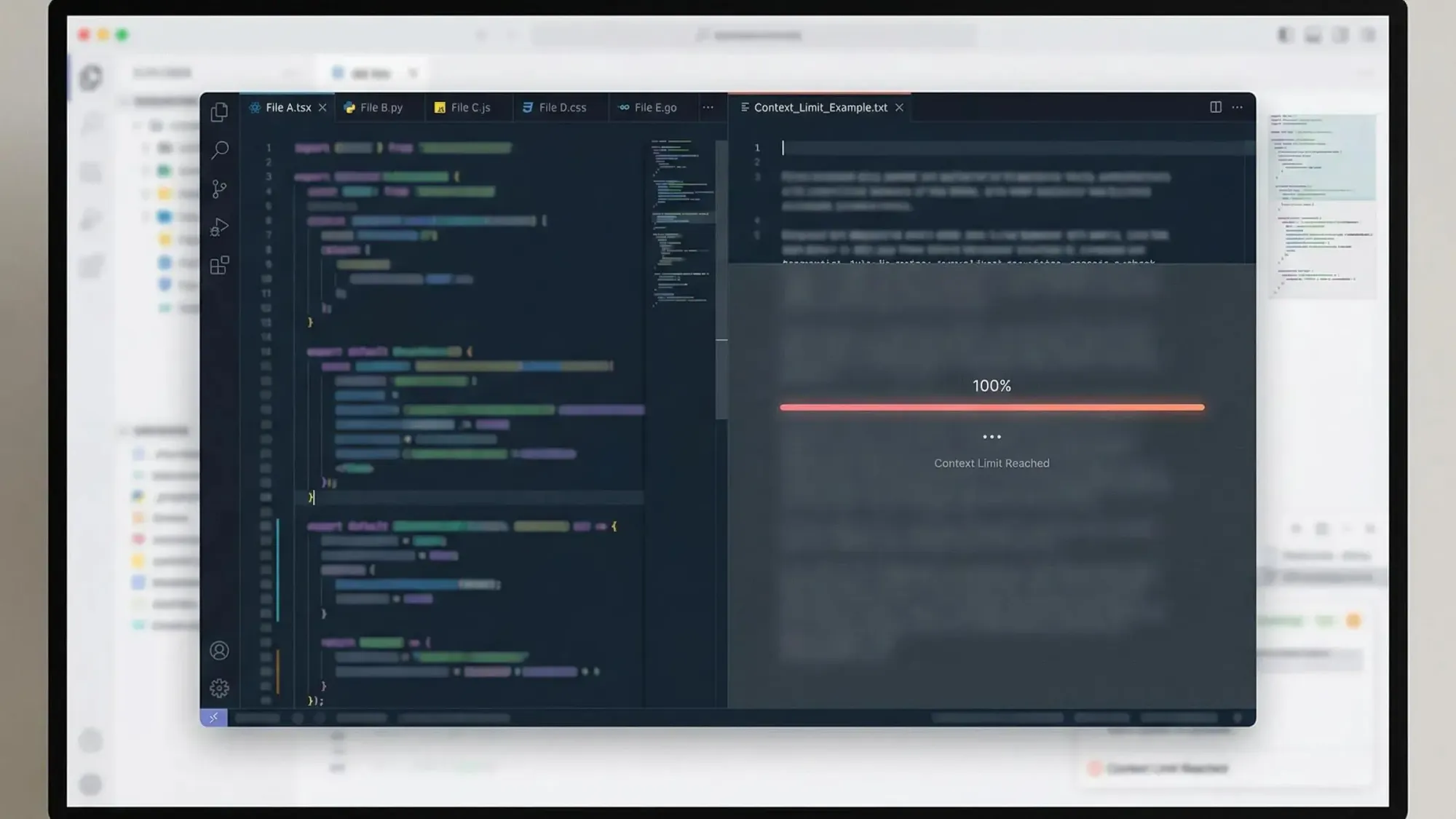

Context Window Limits Impact AI Coding Quality

Context window limits affect AI coding quality in every workflow. We run into them daily as developers using agents to build and ship real products.

Repeated bugs, duplicate logic, and blind spots are frustrating but common.

Your focus is on moving fast, not patching agent errors, so we created a guide to help you cut through limits and get architectural clarity:

- Understand how context window limits affect AI coding quality in practical, session-level terms

- See research-backed reasons why performance degrades well before advertised token limits

- Explore actionable solutions using knowledge graphs and MCP to ground your agents in real repo architecture

Understand What Context Window Limits Mean for AI Coding

Context windows set the ceiling for every coding task you throw at your AI. These limits decide if your agent truly “knows” your repo or just guesses based on highlights.

Why context windows shape your entire workflow:

- The token limit includes all your prompts, files, command history, and model output. When that’s full, quality drops hard. Your agent may skip files or cut off instructions.

- Real-world context limits are much lower than vendor hype suggests. Even with advertised windows of 200K or 1M tokens, you’ll run into gaps. For most teams, the practical limit is closer to 4K–8K tokens, which often means one medium file at best.

- The more context sources you bring in—diffs, PRs, docs, editor state—the faster you hit walls. That means less cross-file reasoning and more missed connections in your code.

- Blind spots form where the agent can’t “see.” When your LLM only gets a slice of your repo, entire integrations or dependencies go unseen.
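To make the budget idea concrete, here is a minimal sketch of session-level token accounting. The 4-characters-per-token ratio, the `ContextBudget` class, and the sample session are all illustrative assumptions; real tokenizers vary by model, so treat the numbers as rough.

```python
from dataclasses import dataclass

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


@dataclass
class ContextBudget:
    window: int           # total tokens the model accepts
    reserved_output: int  # tokens held back for the model's reply

    def remaining(self, parts: dict[str, str]) -> int:
        """Tokens left once prompts, files, and history are counted."""
        used = sum(estimate_tokens(text) for text in parts.values())
        return self.window - self.reserved_output - used


budget = ContextBudget(window=8_000, reserved_output=1_000)
session = {
    "system_prompt": "x" * 2_000,  # about 500 tokens
    "open_file": "x" * 16_000,     # about 4,000 tokens
    "chat_history": "x" * 8_000,   # about 2,000 tokens
}
print(budget.remaining(session))  # 500
```

One prompt, one file, and a modest chat history leave only a few hundred tokens for anything else. A negative result means something will be truncated.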

Let’s drill into why this happens, how it affects code quality, and what’s actually being squeezed out of your agent’s context window.

Real-World Impact of AI Context Limits

- Practical context windows are a fraction of what you expect. You lose room for crucial chat history and outputs.

- The biggest space-hogs include source files open in the editor, large diffs, several active editor contexts, and long-running conversations.

- Enterprise studies show that most AI tools actively process just 2–3 files at a time before fragmentation sets in. That’s not enough for modern SaaS or microservices codebases.

- Even newly announced 64K+ token models still choke once overhead eats the real estate.

- When you expand context windows (to 200K or more), you do see better refactor performance. Teams finish big changes with 35–50% less grunt work.

- Session overhead is a killer. You need to budget for output tokens and repeated instructions, not just “load everything.”

AI tools miss what they can’t see. Large context windows fragment in practice, capping quality and breaking reliability.

Explore How Context Window Limits Create Common AI Coding Failures

You just want your AI dev to avoid breaking things, reuse existing code, and remember your architecture. But with tight context windows, quality slips.

Failures caused by context truncation hit every indie team:

- AI rewrites code that already exists. It can’t see your utility functions when crowded out by other files.

- Instructions get chopped or lost. Result? Functions show up with no valid call sites and broken logic.

- The more files and dependencies, the more likely your agent is blind to test coverage, rules, and relationships.

Stories from real-world teams reinforce this. Enterprise assistants with tight windows hit 40% manual rework on cross-file integrations. That’s a trust and productivity tax you shouldn’t pay.

Common mistakes when context windows fill:

- Callers, interfaces, and helpers disappear. AI writes stubs or orphaned code that never compiles.

- Extra or duplicate logic pops up because critical context sits out of view.

- Anything buried in the middle of a long context is less likely to be found or used. Models attend most to what sits at the very start and the very end.

- Retrieval and ranking failures skew logic. AI grabs random, potentially outdated lines, missing real dependencies.

Every codebase eventually suffers when your model can only parse a sliver at a time. More context sources, fewer clear answers.

Examine Why Bigger Context Windows Do Not Guarantee Better Outcomes

Bigger isn’t always better. Tossing megabytes of context at your model gives you sprawl, not insight.

You get diminishing returns as window size increases. Models forget important details from earlier in the context. Past a certain point, context “rot” sets in. Long-term repo decisions, API contracts, or architectural constraints evaporate within a bloated prompt.

Why this hits every high-context workflow:

- Benchmarks like LongCodeBench show that a drop from 29% to 3% recall as context grows is common, even for cutting-edge models.

- “Context rot” is real. Models overweight the last few interactions and lose sight of module-level instructions.

- As you add more tokens, your signal-to-noise ratio craters. AI pulls in comments, old tests, stray logs that water down quality.

- Teams hit consistent ceilings, no matter what context window is claimed in the docs.

- Quality depends on what you load and how you select—not on unfiltered bloat.
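As a rough illustration of "what you load and how you select," the sketch below scores candidate files against a task description and keeps only the most relevant ones that fit a token budget. The keyword-overlap score, the 4-characters-per-token estimate, and the sample files are assumptions made for this example. A real setup would likely combine embeddings, graph lookups, and a proper tokenizer.

```python
import re


def estimate_tokens(text: str) -> int:
    """Rough token estimate: about 4 characters per token (an assumption)."""
    return -(-len(text) // 4)


def relevance(query: str, text: str) -> int:
    """Count distinct query words that also appear in the text."""
    def split(s: str) -> set[str]:
        return set(re.findall(r"[a-z0-9]+", s.lower()))
    return len(split(query) & split(text))


def select_context(query: str, files: dict[str, str], budget: int) -> list[str]:
    """Score every file, then keep the best-scoring ones that fit the budget."""
    scores = {name: relevance(query, text) for name, text in files.items()}
    ranked = sorted(files, key=lambda name: scores[name], reverse=True)
    chosen, used = [], 0
    for name in ranked:
        if scores[name] == 0:
            break  # remaining files share no words with the query
        cost = estimate_tokens(files[name])
        if used + cost <= budget:
            chosen.append(name)
            used += cost
    return chosen


files = {
    "billing.py": "def charge_invoice(invoice): ...",
    "auth.py": "def login(user): ...",
    "invoice_utils.py": "def format_invoice(invoice): return str(invoice)",
    "old.log": "debug timestamp noise " * 200,
}
print(select_context("fix invoice charge rounding", files, budget=100))
```

The large log file never enters the context because it shares no words with the task. That filtering, not a bigger window, is what protects the signal-to-noise ratio.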

Performance drops sharply before advertised token limits, making “just increase the window” a poor quality strategy for solo founders and lean teams.

Analyze The Impact of Context Window Limits on Team Productivity and AI Trust

Every missed detail chips away at your trust in automated coding. When you start double-checking every suggestion, productivity falls apart.

Let’s get clear about the cost. Small errors stack up—duplicate logic, missed integrations, broken deploys. Fixing these means time lost, more friction, and slow adoption of agents in real dev cycles.

Productivity and Trust Losses When Context Limits Bite:

- Redundant implementations waste hours. Missed reusability slows your roadmap.

- Chasing regressions and fixing missed contracts eats review time. This puts pressure on solo founders and small teams who can’t afford rework.

- Developers get “quiet anxiety” when assistants forget requirements or hallucinate function calls. That leads to more manual checks, slower release cycles, and second-guessing your AI agent.

- Teams reporting broader, persistent code visibility cut manual refactor work by up to 50%. Meanwhile, teams stuck with narrow windows hit double-digit integration rework rates.

Small mistakes compound. Incomplete AI awareness slows innovation and increases risk for fast-moving teams.

Answer Frequently Asked Questions About Context Window Limits and AI Coding Quality

Developers have sharp, practical questions. Here’s what matters most as you push AI coding agents into your workflow:

Top FAQ for teams depending on AI for coding quality:

- Can a bigger context window replace architectural knowledge? No. Only structured systems like knowledge graphs encode relationships and invariants. Raw token count cannot deliver deterministic, queryable understanding.

- What chews up space in a typical dev session? Code files, diffs, previous conversations, system prompts, code snippets, PR comments, and output. Even just swapping between tasks rapidly exhausts your window.

- Why do agents need more than just “more memory”? Because models forget, show recency bias, and suffer context rot. You need retrieval, ranking, and persistent memory (like constitution files or indexed graphs). These create a repeatable ground truth for your entire team.

For the deepest architectural answers, deterministic graph lookups and explicit representations outperform a raw stash of tokens. This is why teams now reach for memory-backed, context-aware infrastructure to backstop their AI agents with actual repo intelligence.

To build real trust in AI code generation, you need more than scale—you need persistent, structured architecture knowledge to eliminate silent failure.

Introduce Architectural Intelligence as the Solution to Context Limits

Most teams hit the context wall quickly. Bigger models don’t cut it. You need a new way to give AI the full story, one that’s fast, precise, and works across your stack.

Architectural intelligence fixes what token limits break. That’s why we built Pharaoh: to turn your repo into a living knowledge graph, not a random pile of vectors or prompts.

Pharaoh uses tree-sitter to parse your TypeScript and Python, then builds a Neo4j graph so every module, dependency, endpoint, and job is instantly searchable. Through the Model Context Protocol (MCP), agents can query the actual structure instead of guessing from output.

Here’s what real repo intelligence looks like in action:

- Jump from interface to dependency, and see every connection—no matter how big your repo is.

- Map function signatures, endpoints, cron jobs, and environment variables so agents never fly blind or duplicate work.

- Instantly analyze blast radius or trace everything reachable from a critical function.

- Integrate seamlessly with Claude Code, Cursor, Windsurf, or GitHub—your workflow stays agile.
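To show what a blast radius query means in practice, here is a toy sketch over an in-memory dependency graph. This is not Pharaoh's implementation or API. The module names and the `IMPORTS` map are hypothetical, and a plain dictionary stands in for a real graph database.

```python
from collections import deque

# Hypothetical dependency edges: module -> modules it imports.
IMPORTS = {
    "api/routes.py": ["services/billing.py", "services/auth.py"],
    "services/billing.py": ["lib/money.py", "lib/db.py"],
    "services/auth.py": ["lib/db.py"],
    "jobs/nightly_invoices.py": ["services/billing.py"],
    "lib/money.py": [],
    "lib/db.py": [],
}


def reverse_edges(graph: dict[str, list[str]]) -> dict[str, list[str]]:
    """Flip the graph so each module maps to the modules that import it."""
    rev: dict[str, list[str]] = {node: [] for node in graph}
    for src, targets in graph.items():
        for dst in targets:
            rev.setdefault(dst, []).append(src)
    return rev


def blast_radius(graph: dict[str, list[str]], changed: str) -> list[str]:
    """Return every module that depends on `changed`, directly or transitively."""
    dependents = reverse_edges(graph)
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for parent in dependents.get(node, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(seen)


print(blast_radius(IMPORTS, "lib/money.py"))
# ['api/routes.py', 'jobs/nightly_invoices.py', 'services/billing.py']
```

The answer comes from walking explicit edges, so it is the same every time and costs no prompt tokens. The agent only needs the short result list in its context.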

The result is zero-marginal-cost queries with no token waste. Your agent thinks with context, not clutter.

Build your agent’s “memory palace.” Let your AI code with ground truth, not a foggy window.

Compare and Contrast Architectural Context With Vector-Only and Large Context Approaches

Let’s break down where each method works—and where it falls apart.

Vector search, pure-token context, and knowledge graphs each have a best fit, but only one delivers repeatable results for deep AI coding:

- Knowledge graphs (like Pharaoh’s) enable exact, queryable insights: trace dependencies, spot duplication, analyze blast radius, and cover real project scope at warp speed.

- Vector search finds “semantically similar” chunks, but stumbles with ambiguity. Great for quick snippets; risky for architecture audits or cross-cutting changes.

- Large context LLMs consume more memory, but retrieval quality nosedives before the window fills. These setups miss full connections and bury key context.

Case in point:

When you want to find every owner of a duplicated function or ensure full PRD–implementation alignment, graphs return answers instantly. With vector search or context window bloat, you drown in partial results and risk missing critical links.
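For a feel of how a structural lookup finds duplicated functions deterministically, here is a small sketch. It uses Python's standard `ast` module as a stand-in for tree-sitter, and the fingerprinting scheme and file contents are assumptions made up for illustration.

```python
import ast
from collections import defaultdict


def function_fingerprints(path: str, source: str):
    """Yield (fingerprint, location) for each function defined in one file."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.FunctionDef):
            # Fingerprint the arguments and body only, so a copy that was
            # merely renamed still matches the original.
            shape = ast.dump(node.args) + "".join(ast.dump(s) for s in node.body)
            yield shape, f"{path}:{node.name}"


def find_duplicates(files: dict[str, str]) -> list[list[str]]:
    """Group functions whose arguments and bodies are structurally identical."""
    groups = defaultdict(list)
    for path, source in files.items():
        for shape, location in function_fingerprints(path, source):
            groups[shape].append(location)
    return sorted(sorted(locs) for locs in groups.values() if len(locs) > 1)


files = {
    "utils/text.py": "def slugify(s):\n    return s.lower().replace(' ', '-')\n",
    "blog/helpers.py": (
        "def make_slug(s):\n    return s.lower().replace(' ', '-')\n\n"
        "def title(s):\n    return s.title()\n"
    ),
}
print(find_duplicates(files))
# [['blog/helpers.py:make_slug', 'utils/text.py:slugify']]
```

This only catches exact structural copies. Near-duplicates with renamed variables or reordered statements would need a fuzzier comparison.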

For detailed repo tasks—blast radius checks, cross-repo search, exact function chaining—knowledge graphs pull ahead. See for yourself: open source frameworks for agentic repo quality are now built on structured context, not just token scale.

Graph-based context means exact answers, less guesswork, and cleaner integrations—every time.

Share Best Practices for Working With Context Windows in AI Coding

If you’re relying on AI to code for you, you need repeatable habits to prevent context sprawl and failed suggestions.

Top tactical moves to crush context window headaches:

- Build context in two tracked stages: first, retrieve candidate files; then rank and prune by relevance. Set a strict token budget up front.

- Use combined retrieval: Keyword for precision, embeddings for recall, graphs for structure, editor context for instant intent.

- Store project rules, API contracts, and architecture decisions in external “constitution” or memory files—don’t let your best knowledge get lost in chat history.

- Prune session bloat. Start fresh when you hit noise, or use agent handoffs so no single window needs to carry the full load.

- Add guardrails: simple compile checks, static analysis, and symbol-existence verification spot hallucinations before review.

- Adopt repo mapping tools (like Pharaoh) for blast radius scanning, ownership lookups, and dependency awareness. For quality, these typically beat “just toss more tokens.”
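The symbol-existence guardrail above can be sketched with Python's standard `ast` module. This is a minimal example under stated assumptions: it only checks bare function calls like `foo()`, not method calls, and the `repo` and `generated` snippets are made up for illustration.

```python
import ast
import builtins


def defined_names(source: str) -> set[str]:
    """Collect function, class, and imported names defined in a module."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            names.add(node.name)
        elif isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                names.add((alias.asname or alias.name).split(".")[0])
    return names


def unknown_calls(generated: str, known: set[str]) -> list[str]:
    """Return bare function names the generated code calls but nothing defines."""
    # ast.parse doubles as a syntax check: it raises SyntaxError on broken code.
    tree = ast.parse(generated)
    allowed = known | defined_names(generated) | set(dir(builtins))
    missing: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id not in allowed:
                missing.add(node.func.id)
    return sorted(missing)


repo = "def load_user(user_id):\n    return {'id': user_id}\n"
generated = (
    "def handler(user_id):\n"
    "    user = load_user(user_id)\n"
    "    return serialize_user(user)\n"
)
print(unknown_calls(generated, defined_names(repo)))  # ['serialize_user']
```

A check like this can run before human review and flags `serialize_user` as a likely hallucination. It will also flag callables passed in as parameters or local variables, so treat hits as prompts for a closer look rather than hard failures.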

Mix these strategies to keep suggestions sharp, reviews quick, and agents useful right up to production.

Outline the Future of Context Windows and AI Coding Agent Workflows

Developers are shifting away from brute-force memory expansion. The future is lean, structured, and persistent—built on blueprints, not guesswork.

Emerging best practices for agentic workflows:

- Start with knowledge graphs as the backbone. These bring clarity and instant answers, even for sprawling legacy systems.

- Embed recursive LLMs and managed memory to keep agents context-rich without bloating tokens.

- Lean on explicit architectural protocols (like MCP) so that agents ask, verify, and build on ground truth—no more silent rot.

- Integrate layer-by-layer workflow patterns: pull structure, dive into details, produce action plans through summary agents.

Agents get smarter, your codebase gets safer, and productivity scales with every new tool that plugs into structured context.

The best teams supplement AI memory with blueprints and repeatable repo maps. Context window hacks won’t keep up.

Conclusion: Move Beyond Token Limits to Achieve AI Coding Quality

Context limits cap your AI’s ability to deliver real results. Reliability, trust, and speed all depend on more than memory—they demand persistent, structured codebase context.

Don’t settle for wasted suggestions or bloated tokens. Use systems that make repo architecture transparent, repeatable, and actionable for your agents.

Pharaoh gives you architectural memory from day one. Try our free tier, load your repo, and let your agent code with confidence. If you want your AI tools to hit production standards, demand answers, not guesses.

Aim higher than token limits. Start building with architectural intelligence and leave context clogs behind.