How to Prevent Regressions with AI Coding for Small Teams

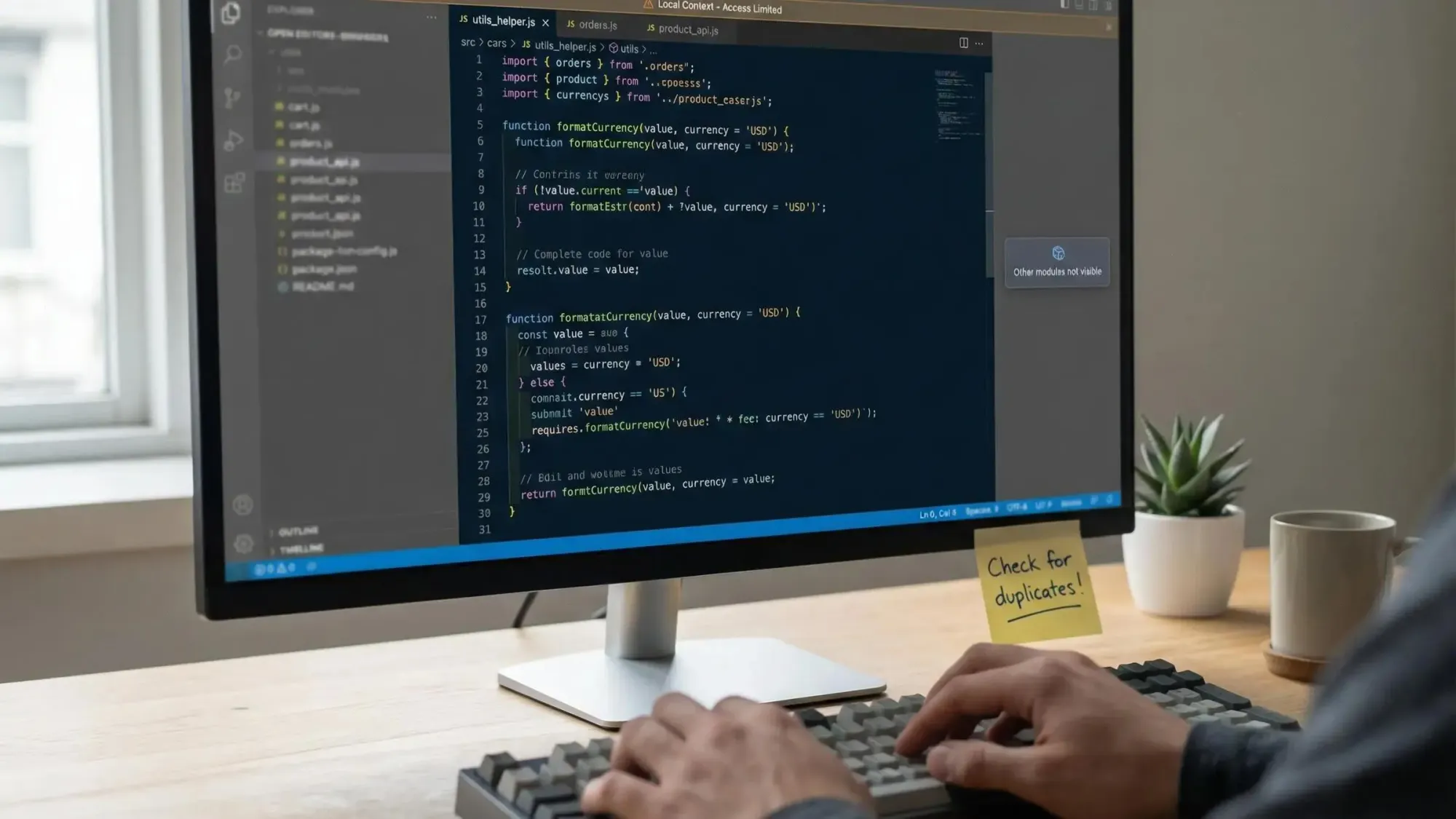

Preventing regressions with AI coding is a challenge every developer using Claude Code, Cursor, Windsurf, or GitHub Copilot knows well. When your agents speed through updates, the resulting silent breakage, duplicated logic, and context gaps can cost you days or weeks.

We understand how high the stakes can feel, so this guide shares proven strategies and new workflows, including:

- Practical ways to prevent regressions with AI coding during fast-paced development

- Structuring your codebase into a live, queryable knowledge graph for agent context

- Guardrails and checks to stop silent breakage, duplicate logic, and architectural drift before they reach production

Understanding Regression in Small Team AI Coding

Every developer pushing code with an AI assistant has faced it: something silently breaks, a performance cliff appears, or a messy duplicate turns reviews into wild goose chases. Regressions in agent-driven workflows hit small teams the hardest because you move fast and own everything.

Common Regression Risks When Coding with AI

- Regressions are changes that cause working features to fail, degrade, or act unpredictably.

  Result: Bugs surface post-merge, often in areas not covered by basic test cases.

- AI agents like Claude Code or Copilot work on whatever context you provide.

  Best-fit: Ideal for rapid change, but they miss dependencies in side files or hidden modules.

- Without a holistic, queryable codebase, they miss “invisible” links, such as custom constraints or deep architectural patterns.

  Proof point: Industry research flags LLMs for hallucinating imports and missing domain constraints, producing builds that don’t even run.

- Small teams have little review capacity and real gaps in test coverage.

  Result: Regressions can linger, especially in dead zones like admin paths or less-used features.

When you rely on “open file + context window” development, the odds of missing something grow. Incremental tests and atomic PRs help, but they won’t catch structural risks you can’t see. This matters for any founder tired of chasing silent breakage weeks later.

The fastest way to ship confidently with AI agents is to make your codebase instantly queryable and always up-to-date.

Why AI Coding Agents Increase Regression Risk

AI tools accelerate delivery, but there’s a price: local knowledge and short-term memory. Agents generate code based on partial context—usually just the files you feed them. That means one thing for your repo: structural drift.

- Localized context means agents patch what they see and duplicate what they don’t.

  Result: Unchecked, your codebase balloons with clone-helpers and missed API contracts that break at runtime.

- Over 75% of small teams using code agents run into regression bugs during shipping sprints.

  Ideal use: Tactical changes, not long-term maintenance or cross-module refactoring.

- “Vibe coding” happens when you trust output based on surface-level review.

  Insight: This is the root of silent breakage. Code “works” in a limited context but doesn’t fit the whole system.

Pharaoh builds architectural intelligence for agent workflows, targeting this weak spot. By letting agents “see” your codebase as a structured graph, you cut out local-only patches, crush duplication before it starts, and keep drift in check no matter how fast you or your agent move.

Recognizing the Core Failure Patterns of AI Code

AI code fails in ways that are systematic and hard to spot through file-by-file reviews. Without structural context, issues cluster and repeat, eating time and trust.

Key Systematic Failure Patterns

- Duplicated logic: Agents produce helpers with small variations, making future fixes slower and riskier.

- Broken or brittle APIs: Signatures or implicit contracts change. Downstream chaos erupts when nobody catches it.

- Orphaned endpoints: AI deletes wiring, leading to dead code or missing features.

- Edge cases missed: AI skips rare-path handling, leaving holes tests rarely cover.

- Security or validation gaps: Model forgets domain rules, leading to subtle vulnerabilities.
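To make the duplicated-logic pattern concrete, here is a minimal sketch of how near-identical helpers can be flagged. It assumes Python sources and uses the standard-library `ast` module; the names `fingerprint` and `find_duplicates` are hypothetical, and the approach only catches clones that differ in identifier names, not in structure.

```python
import ast
import copy
import hashlib
from collections import defaultdict


class _Normalizer(ast.NodeTransformer):
    """Rename identifiers in order of appearance so cosmetic renames do not hide a clone."""

    def __init__(self) -> None:
        self.names: dict[str, str] = {}

    def _alias(self, name: str) -> str:
        return self.names.setdefault(name, f"v{len(self.names)}")

    def visit_arg(self, node: ast.arg) -> ast.arg:
        node.arg = self._alias(node.arg)
        return node

    def visit_Name(self, node: ast.Name) -> ast.Name:
        node.id = self._alias(node.id)
        return node


def fingerprint(func: ast.FunctionDef) -> str:
    """Hash a function's structure, ignoring its own name and its variable names."""
    clone = copy.deepcopy(func)
    clone.name = "f"
    normalized = _Normalizer().visit(clone)
    return hashlib.sha256(ast.dump(normalized).encode("utf-8")).hexdigest()


def find_duplicates(sources: dict[str, str]) -> list[list[str]]:
    """Group functions (as 'path:name') that share a structural fingerprint."""
    groups: dict[str, list[str]] = defaultdict(list)
    for path, text in sources.items():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, ast.FunctionDef):
                groups[fingerprint(node)].append(f"{path}:{node.name}")
    return [sorted(group) for group in groups.values() if len(group) > 1]
```

Run against two files that each define a price-summing loop under different names, this would report the pair as one group, which is the kind of clone an agent tends to produce when it cannot see the existing helper.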

These problems aren’t like simple “fat finger” mistakes. They arise because AI only knows what’s visible—and if code structure is hidden, so are the risks. You'll spot patterns: sudden complexity jumps, unexplained test failures, and blocks of code showing up in multiple locations.

Perform regular system-aware checks to prevent repeat failures that sabotage productivity and stability.
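One system-aware check for the broken-API pattern is to compare function signatures before and after a change. The sketch below is an illustration under stated assumptions: it handles top-level Python functions only, the helper names `signatures` and `breaking_changes` are hypothetical, and it is deliberately conservative, so even adding an optional parameter is reported for a human to confirm.

```python
import ast


def signatures(source: str) -> dict[str, list[str]]:
    """Map each top-level function name to its ordered parameter names."""
    result: dict[str, list[str]] = {}
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            args = node.args
            params = args.posonlyargs + args.args + args.kwonlyargs
            result[node.name] = [param.arg for param in params]
    return result


def breaking_changes(old_source: str, new_source: str) -> list[str]:
    """List functions that were removed or whose parameter list changed."""
    old, new = signatures(old_source), signatures(new_source)
    problems: list[str] = []
    for name, params in sorted(old.items()):
        if name not in new:
            problems.append(f"{name}: removed")
        elif new[name] != params:
            problems.append(
                f"{name}: parameters changed from {params} to {new[name]}"
            )
    return problems
```

Feeding it the pre-change and post-change versions of a module gives a short list a reviewer can scan before approving an agent's edit.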

Adopting an Architecture-First Mindset Over Token-Based Coding

Small teams using file-by-file LLM workflows hit a context wall. You need structure as a first-class citizen, not just search. Architecture-first means your code isn’t just text—it’s a graph of functions, endpoints, services, and flows your AI can actually understand.

- With a knowledge graph, every function, dependency, or endpoint is a fact, not a guess.

- Agents grounded in structure answer real questions: “Who uses this?” “What breaks if I change that?”

  Ideal for: Refactors, system upgrades, and feature additions where scope matters.

- Zero-token, deterministic queries beat per-question LLM calls.

  Proof: Teams report 30–40% faster discovery, 25–35% better AI agent accuracy, and drastically fewer “ghost regressions” post-launch.

When agents query a structured source, they surface what already exists, spot conflicts, and stop duplication before it starts. Your feedback loop gets tighter. You move faster without giving up control.

Mapping the Codebase as a Living Knowledge Graph

You can’t protect what you can’t see. That’s why the smartest teams make their repo fully queryable—a living digital twin with nodes for modules, functions, endpoints, and relationships for their dependencies and connections.

- Use deterministic parsing (like tree-sitter) to map every function, module, and endpoint accurately.

- Push that structure into a Neo4j graph for fast, repeatable lookups and queries.

- Keep the map fresh with automated re-indexing on every PR or nightly job.
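The mapping step can be sketched in a few lines. This is a simplified illustration, not a production indexer: it swaps in Python's standard-library `ast` parser for tree-sitter, keeps the graph in plain dictionaries rather than Neo4j, and resolves calls by bare name only. The function name `build_call_graph` is hypothetical.

```python
import ast


def build_call_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map every defined function name to the defined functions it calls."""
    trees = [ast.parse(text) for text in sources.values()]
    func_types = (ast.FunctionDef, ast.AsyncFunctionDef)

    # First pass: collect the nodes of the graph (every function definition).
    defined: set[str] = set()
    for tree in trees:
        for node in ast.walk(tree):
            if isinstance(node, func_types):
                defined.add(node.name)

    # Second pass: collect the edges (caller -> callee) from call expressions.
    graph: dict[str, set[str]] = {name: set() for name in defined}
    for tree in trees:
        for func in ast.walk(tree):
            if not isinstance(func, func_types):
                continue
            for node in ast.walk(func):
                if isinstance(node, ast.Call):
                    callee = node.func
                    if isinstance(callee, ast.Name):
                        name = callee.id
                    else:
                        # Covers method-style calls such as obj.helper().
                        name = getattr(callee, "attr", None)
                    if name in defined:
                        graph[func.name].add(name)
    return graph
```

The resulting node and edge sets are the raw material that would be loaded into a graph store; a real pipeline would also need module-qualified names so that two functions with the same name in different files do not collide.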

With Pharaoh, you get this out of the box: parse your TS/Python repo, auto-map modules, endpoints, cron jobs, and environment variables, and expose it through MCP. Your agents get 13 tools to trace blast radius, find dead code, or check reachability—so you eliminate blind spots. Support for self-hosted deployment keeps private knowledge secured, while permission-aware retrieval ensures agents only see what they should.

Every small team can now give their agents full context—without drowning in manual config or slow code search.
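Keeping the map fresh on every PR does not have to mean re-parsing the whole repository. A minimal sketch, assuming the indexer stores a content hash per file from its previous run: compare hashes and re-parse only what changed. The names `content_hashes` and `plan_reindex` are hypothetical.

```python
import hashlib


def content_hashes(sources: dict[str, str]) -> dict[str, str]:
    """Compute a SHA-256 digest for each file's text, keyed by path."""
    return {
        path: hashlib.sha256(text.encode("utf-8")).hexdigest()
        for path, text in sources.items()
    }


def plan_reindex(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Decide which files to re-parse and which to drop from the graph."""
    return {
        # New files and files whose content hash changed need parsing.
        "parse": sorted(p for p, digest in current.items() if previous.get(p) != digest),
        # Files that disappeared should have their nodes removed.
        "drop": sorted(p for p in previous if p not in current),
    }
```

A nightly full re-index can then act as a safety net in case an incremental run was skipped or interrupted.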

Integrating Architectural Intelligence into Your Workflow

You need instant access to facts, not just guesses, when you code with agents. When agents can query actual code structure, their changes go from risky to reliable. This unlocks a smarter workflow for solo devs and small teams.

- Query-before-you-ship: Trace blast radius before refactors, check reachability before deleting, and map function usage before edits.

- Use MCP endpoints with Claude Code, Cursor, or Windsurf so all agent actions share the same trustworthy context.

- Real-time, zero-token queries mean you check scope in seconds, not minutes, without burning compute or tokens.
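A blast-radius query is, at its core, a reverse traversal of the call graph. The sketch below assumes the graph is available as a plain mapping from each function to the functions it calls; that shape and the name `blast_radius` are assumptions for illustration, not the interface of any particular tool.

```python
from collections import deque


def blast_radius(calls: dict[str, set[str]], changed: str) -> set[str]:
    """Return every function that directly or transitively calls `changed`."""
    # Invert the edges so we can walk from a callee up to its callers.
    callers: dict[str, set[str]] = {}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)

    affected: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in affected:
                affected.add(caller)
                queue.append(caller)

    # A recursive function is its own caller; report only the other functions.
    affected.discard(changed)
    return affected
```

Because the traversal is deterministic and runs over an in-memory structure, it costs no model tokens, which is the property the workflow above relies on.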

Plug pre-flight graph checks into pre-commit or CI, so every PR and review is agent- and human-friendly. You catch impact, not just syntax or static smells. Permission controls and auditability features keep governance simple for teams with strict requirements.

When agents build on a living architectural backbone, your speed stays high, but silent breakage drops.

Practical Guardrails: Policies, Checks, and Automated Gatekeeping

Smart teams rely on more than written docs to prevent regressions. You need real guardrails that catch breakage at the source and flag risks without slowing your flow.

Blast radius checks, reachability verification, and dead code detection shift quality left. They protect you before merging, not after an incident. Setup is simple but powerful.

Guardrails That Actually Prevent Regressions

- Blast radius checks: Catch changes that will impact critical services or modules.

  Result: No surprise outages or angry DMs about “what broke the thing.”

- Reachability verification: Warns if a PR deletes endpoints, APIs, or helpers still in use inside or outside the repo.

  Proof: Cuts dead code without breaking working features.

- Dead code and duplication checks: Uncover orphaned or copy-pasted logic to keep tech debt in check and reviews focused.

- CI gates with coverage enforced: Force all critical code paths to get tested before merge, even if that means pushing back a ship date.

- Rollback workflows: Auto-trigger rollbacks or feature flags based on SLO burn rates or error surges.

  Best-fit for: Aggressive agents and rapid releases.
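The reachability and dead-code guardrails above can be sketched as two small graph checks. Both assume a call graph represented as a mapping from function name to the set of names it calls, plus a known set of entry points; the function names `unreachable` and `blocked_deletions` are hypothetical.

```python
def unreachable(calls: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Return functions that no entry point can reach (dead-code candidates)."""
    seen: set[str] = set()
    stack = [name for name in entry_points if name in calls]
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        stack.extend(calls.get(current, ()))
    return set(calls) - seen


def blocked_deletions(calls: dict[str, set[str]], deleted: set[str]) -> dict[str, set[str]]:
    """For each function a PR deletes, list surviving callers that still use it."""
    blocked: dict[str, set[str]] = {}
    for caller, callees in calls.items():
        if caller in deleted:
            continue  # A caller that is also being deleted is not a problem.
        for callee in callees & deleted:
            blocked.setdefault(callee, set()).add(caller)
    return blocked
```

A CI step could fail the build whenever `blocked_deletions` returns a non-empty result, and surface the output of `unreachable` as a non-blocking report for cleanup.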

For speed, focus on actionable, near-instant feedback, not endless waiting. Use PR Guard-style hooks as a safety net: you get sub-10-minute checks that let you keep moving without risking silent regressions.

Policies alone don’t cut it. Automated, structure-aware checks deliver results and peace of mind for small, AI-powered teams.
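For the rollback guardrail, the trigger condition can be a simple burn-rate calculation: the observed error rate divided by the error budget implied by the SLO. The sketch below is illustrative; the default target of 99.9% and the threshold of 14.4 are assumptions you would tune for your own service, and the function names are hypothetical.

```python
def burn_rate(errors: int, requests: int, slo_target: float) -> float:
    """Return how many times faster than budgeted the error budget is being spent."""
    if requests == 0:
        return 0.0
    error_budget = 1.0 - slo_target  # e.g. 0.001 for a 99.9% SLO
    return (errors / requests) / error_budget


def should_roll_back(
    errors: int,
    requests: int,
    slo_target: float = 0.999,  # assumed target, adjust per service
    threshold: float = 14.4,    # assumed fast-burn threshold, adjust per window
) -> bool:
    """Signal a rollback (or feature-flag flip) when the burn rate is too high."""
    return burn_rate(errors, requests, slo_target) >= threshold
```

A burn rate of 1 means the budget would last exactly the SLO period; values well above that shortly after a deploy are a strong hint that the deploy itself is the cause.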

Preventing Common Regression Traps in Agent-Driven Development

You want actionable, repeatable steps that catch regressions before they hit main. We built this list exactly for small teams coding with agents and scaling fast.

Your Pre-Merge Regression Prevention Checklist

- Aim for atomic, single-purpose PRs: easy to revert, easy to audit, fewer merge headaches.

- Always run the local and CI test suite. Never skip static analysis or coverage gates.

  If code isn’t covered, it’s a ticking bomb.

- Require a real code review. Scan every line, especially agent-changed logic, for behavioral drift, domain misses, or subtle contract breaks.

- Query the knowledge graph before merging: Check which downstream clients, endpoints, or tests will break.

  Eliminates “didn’t know that was used in prod” surprises.

- Keep agent actions reproducible: Log prompts, context, and responses for every auto-edit, so it is fast to debug or roll back if needed.

- Prefer feature flags and automated rollback triggers for risk-heavy changes.

- Include load testing and adversarial inputs so you spot scale or security regressions early.
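Keeping agent actions reproducible can be as simple as an append-only JSON Lines log. This is a minimal sketch with a hypothetical record layout; the field names and the function `log_agent_action` are assumptions, and a real setup would likely also record the model name and the commit the edit was applied to.

```python
import hashlib
import json
import time
from pathlib import Path


def log_agent_action(log_path, prompt, context_files, response, now=None) -> dict:
    """Append one agent edit (prompt, context, response) to a JSON Lines file."""
    record = {
        "timestamp": now if now is not None else time.time(),
        "prompt": prompt,
        "context_files": sorted(context_files),
        # The digest makes it cheap to confirm a logged response was not altered.
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "response": response,
    }
    with Path(log_path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, sort_keys=True) + "\n")
    return record
```

Because each line is a complete JSON object, the log can be searched with ordinary text tools when you need to find which prompt produced a suspicious change.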

Following this process makes agent-powered coding faster and safer—no more one-off fixes that become permanent landmines.

Checklist-driven development stops regressions and builds habits that scale as your codebase and agent activity grow.

Evaluating Tools and Choosing the Right Solution

Every small dev team faces choice overload. When picking tools to prevent AI agent regressions, focus on system coverage, cost, and actionable checks. Not all solutions work for deep, multi-repo, or high-velocity agent-driven work.

What to Demand in Regression-Prevention Tooling

- Ability to detect cross-repo impact and hidden dependencies, which is essential for monorepos or microservices.

- Seamless integration into CI, PR, and local dev flows.

  If setup is slow, adoption tanks fast.

- Self-hosting and permission controls for private code.

  You retain governance and full audit logs.

- Deterministic, graph-based queries instead of per-prompt LLM costs, so the approach scales with team and repo size.

- Metrics for retrieval quality (Precision@10, Recall@50, MRR, F1) to prove the solution is doing real work and not just adding sales noise.
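The retrieval metrics named above are straightforward to compute yourself when piloting a tool. The sketch below uses the standard definitions of precision at k, recall at k, and mean reciprocal rank; the function names and the input shapes (a ranked list of result IDs and a set of known-relevant IDs) are assumptions for illustration.

```python
def precision_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k results that are relevant."""
    if k <= 0:
        return 0.0
    return sum(1 for item in ranked[:k] if item in relevant) / k


def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant items that appear in the top-k results."""
    if not relevant:
        return 0.0
    return sum(1 for item in ranked[:k] if item in relevant) / len(relevant)


def reciprocal_rank(ranked: list[str], relevant: set[str]) -> float:
    """1 / position of the first relevant result, or 0.0 if none is found."""
    for position, item in enumerate(ranked, start=1):
        if item in relevant:
            return 1.0 / position
    return 0.0


def mean_reciprocal_rank(runs: list[tuple[list[str], set[str]]]) -> float:
    """Average the reciprocal rank over several (ranked, relevant) query runs."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(ranked, relevant) for ranked, relevant in runs) / len(runs)
```

A small hand-labeled set of questions about your own repo ("which functions call this helper?") is usually enough to compare two tools on these numbers.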

Pharaoh is built for this reality. We use deterministic graph queries, support self-hosted deployment, integrate directly with agent tooling and CI, and focus on the high-quality, structure-first insights that small AI-native teams actually need.

When in doubt, pilot a repo and see if duplicates, dead code, or hidden impacts surface in minutes—not days.

Measuring Success: Reducing Regressions and Building with Confidence

To know your process works, track outcomes that actually matter. Don’t drown in dashboards or vanity metrics. Own a short list of numbers tied to business impact and engineering velocity.

Metrics That Prove Your Regression Prevention Works

- Track regression rate (bugs per release or sprint). Look for measurable drops after adding architectural guardrails.

- Cut review cycle time: can you get closer to same-day merges without breakage?

- Lower cyclomatic complexity and code duplication over time.

  Proof of less technical debt and higher agent code quality.

- Track time from “bug found” to “fix live.” Aim for short, predictable timelines.

- Improve onboarding speed, measured by how quickly a new dev (or agent) can make meaningful contributions risk-free.
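To track the complexity metric from the list above without extra tooling, a rough approximation of cyclomatic complexity can be computed from the syntax tree. This sketch assumes Python sources and counts one path plus one per branching construct; it is an estimate for trend tracking, not a replacement for a dedicated analyzer, and the name `cyclomatic_complexity` is hypothetical.

```python
import ast

# Constructs that each add one decision point to the control flow.
_BRANCH_NODES = (
    ast.If,
    ast.For,
    ast.AsyncFor,
    ast.While,
    ast.ExceptHandler,
    ast.IfExp,
    ast.comprehension,
)


def cyclomatic_complexity(source: str) -> int:
    """Approximate McCabe complexity: 1 plus the number of decision points."""
    score = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra short-circuit paths.
            score += len(node.values) - 1
    return score
```

Recording this number per function on each release gives you a trend line; a sudden jump in a file an agent just touched is worth a closer look.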

If you see these numbers trending in the right direction, your system is working. Our users see 30–40% faster knowledge discovery and 25–35% more accurate agent recommendations with Pharaoh’s knowledge graph approach.

Focus on metrics that lead to faster shipping with less regret and lower support burden per release.

Frequently Asked Questions on Preventing Regressions with AI Coding

AI-native devs want clarity, not theory. Here are direct answers from the field.

- AI regressions often repeat because the model lacks true global context. Human regressions are more random, based on fatigue or rushed decisions.

- You should refresh your knowledge graph on every PR, with a nightly full re-index as backup.

- Self-hosted graphs are secure for private repos—Pharaoh supports this today, with granular access controls by source and scope.

- Not using TypeScript or Python? Tree-sitter supports many languages, and you can extend or combine parsers.

- Monorepos and cross-repo work? That’s a core payoff—graph relationships show all reachability and duplication, even across service lines.

- This approach supplements code review. Human sign-off stays the last gate. The graph just makes reviews smarter, faster, and safer.

- Governance is built-in. Every structural query and agent action is auditable for compliance-driven teams.

Mix AI, structure, and disciplined process for the safest, fastest agent-driven coding possible.

Conclusion: Moving from Guesswork to Proactive Control

Preventing regressions with AI coding isn’t luck or guesswork. It’s about discipline, architecture, and instant access to real codebase facts. Give your agents the knowledge graph, double down on guardrails, and flip fear of breakage into confidence to scale. Pharaoh gives you the map—now build with speed and certainty.