Knowledge Graph for Code Explained: A Practical Guide

A knowledge graph for code sounds abstract until your agent renames one helper, misses two downstream callers, and quietly breaks a cron job. That's the real problem: AI tools can write code fast, but they still miss structure when the repo looks like nothing more than files and folders.

What matters is whether you can answer the questions that usually burn an afternoon (or a Friday deploy): what calls this, where does it enter production, and does the same logic already exist somewhere else.

A few things worth getting straight first:

- A code graph is about relationships, not "smart search"

- Blast radius beats file reading when you're changing shared code

- Reachability is the check people skip, then regret later

Read this and you'll stop guessing.

The Problem AI Coding Tools Still Have

You’ve probably seen this one already. You ask Claude Code, Cursor, or Windsurf to refactor a shared utility. It changes the obvious files, the diff looks clean, tests pass locally, and then some unrelated endpoint breaks because the agent never saw the callers two hops away.

That pattern keeps repeating because the weak point usually isn’t syntax. It’s structure.

The failure modes are familiar:

- the agent writes a new helper even though near-identical logic already exists in another module

- it makes a breaking change because it didn’t trace downstream dependencies

- it adds code that never gets wired into an endpoint, job, or actual runtime path

- it burns half the context window reading files just to build a shaky mental model

File-by-file exploration works for toy repos. It gets ugly fast in a product codebase where the same concept shows up across routes, jobs, services, and shared modules. Solo founders feel this early because there’s no spare person doing architecture babysitting. Small teams feel it by the second afternoon of a refactor sprint.

The core issue is simple: AI tools often explore code like a stranger walking room to room with no blueprint.

A knowledge graph for code turns that blueprint into something queryable before an agent edits anything.

What a Knowledge Graph for Code Actually Is

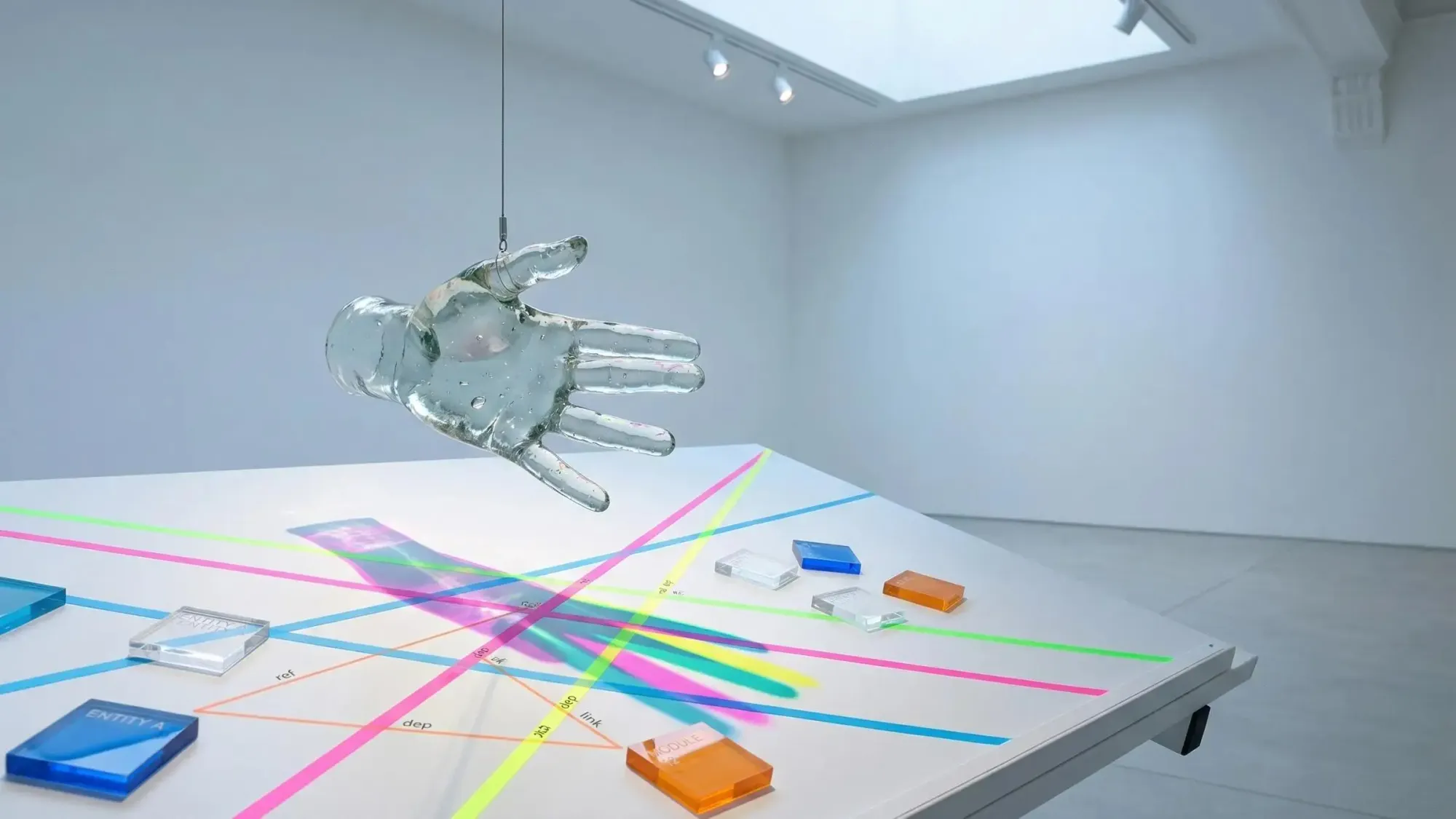

Plain version: a knowledge graph for code is a structured map of code entities and the relationships between them.

The entities become nodes. The relationships become edges.

Typical nodes include:

- functions

- files

- modules

- endpoints

- cron handlers

- environment variables

Typical edges include:

- calls

- imports

- exports

- depends on

- owned by

- reachable from

That sounds abstract until you use it to answer actual engineering questions. Not “find this string,” but “what depends on this function?” or “is this export reachable from a production entry point?”

That’s the difference.

A graph is not just a diagram. It’s not just a search box either. It gives you traversable relationships.

Here’s the practical split:

- AST gives syntax structure

- grep gives text matches

- vector search gives similarity

- a code knowledge graph gives architectural relationships you can walk

If grep finds formatCurrency, that tells you where text appears. If a graph shows formatCurrency -> BillingService -> /api/invoices -> nightly reconciliation job, you can reason about blast radius. Very different job.
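To make "relationships you can walk" concrete, here is a minimal sketch of a typed node-and-edge model. The class names (`Node`, `Edge`, `CodeGraph`) are hypothetical, and the edge directions are an assumption: each arrow in the formatCurrency chain is read as "is used by", so the edges point from caller to callee.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    id: str
    kind: str  # e.g. "function", "module", "endpoint", "cron"


@dataclass(frozen=True)
class Edge:
    source: str
    relation: str  # e.g. "calls", "imports"
    target: str


class CodeGraph:
    """Hypothetical in-memory graph; a real system would use a graph database."""

    def __init__(self):
        self.nodes = {}
        self.edges = []

    def add_node(self, node_id, kind):
        self.nodes[node_id] = Node(node_id, kind)

    def add_edge(self, source, relation, target):
        self.edges.append(Edge(source, relation, target))

    def incoming(self, node_id, relation):
        """Return the nodes that have a `relation` edge pointing at node_id."""
        return sorted(
            e.source
            for e in self.edges
            if e.target == node_id and e.relation == relation
        )


graph = CodeGraph()
graph.add_node("formatCurrency", "function")
graph.add_node("BillingService", "module")
graph.add_node("/api/invoices", "endpoint")
graph.add_node("nightlyReconciliation", "cron")

# Assumed direction: caller -> callee.
graph.add_edge("BillingService", "calls", "formatCurrency")
graph.add_edge("/api/invoices", "calls", "BillingService")
graph.add_edge("nightlyReconciliation", "calls", "/api/invoices")

print(graph.incoming("formatCurrency", "calls"))
```

A text search would only report where the string formatCurrency appears. The `incoming` query answers a different question: which known entity calls it, and what kind of entity that is.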

Good code context is not more text. It’s better structure.

Why Developers Search for This Now

This topic matters more because AI coding moved from “help me write a function” to “help me operate a codebase.” Once agents started touching refactors, reviews, and wiring work, structural blindness became expensive.

The MCP shift made this sharper. Instead of forcing a model to infer everything from raw files, we can give it tools that answer specific questions. That’s a better pattern. Less guessing, less theater.

The mental move is small but important:

- stop prompting harder

- start giving the agent a map it can query

We’ve found that confidence in AI-assisted development comes from context quality more than model quality. A stronger model with poor context still makes clean-looking mistakes. A decent model with the right structural map often makes calmer decisions.

So when people search for “knowledge graph for code explained,” they’re usually not chasing theory. They want a way to stop rediscovering the same repo architecture every session.

How a Code Knowledge Graph Is Built

The pipeline is pretty straightforward when you strip away the buzz.

- Connect a repository.

- Parse source files.

- Extract entities like functions, modules, imports, exports, and endpoints.

- Resolve cross-file relationships.

- Store the result in a graph database.

- Query it through tools or APIs.

The key part is deterministic parsing. Tools like Tree-sitter read source code structure without guessing. That matters because the graph should come from actual code relationships, not from a model improvising what it thinks the repo probably does.
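The parse-and-extract steps can be sketched in a few lines. This example swaps in Python's built-in `ast` module as a stand-in for Tree-sitter, purely so it runs with no dependencies. The sample source and the `extract_call_edges` helper are hypothetical, and it only resolves direct calls to functions defined in the same source string.

```python
import ast

# Hypothetical sample source to parse.
SOURCE = '''
def format_currency(amount):
    return f"${amount:.2f}"

def build_invoice(total):
    return {"total": format_currency(total)}

def send_invoice(total):
    invoice = build_invoice(total)
    print(invoice)
'''


def extract_call_edges(source):
    """Return (functions, edges); edges are (caller, callee) pairs
    between functions defined in `source`."""
    tree = ast.parse(source)
    functions = {
        node.name for node in ast.walk(tree) if isinstance(node, ast.FunctionDef)
    }
    edges = set()
    for fn in ast.walk(tree):
        if not isinstance(fn, ast.FunctionDef):
            continue
        # Calls in nested functions are attributed to the outer function too;
        # acceptable for a sketch, not for a production extractor.
        for node in ast.walk(fn):
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                if node.func.id in functions:
                    edges.add((fn.name, node.func.id))
    return functions, edges


functions, edges = extract_call_edges(SOURCE)
for caller, callee in sorted(edges):
    print(f"{caller} -> {callee}")
```

The same input always yields the same edges, which is the property the graph relies on. Cross-file resolution (imports, re-exports, method calls) is the harder part and is left out here.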

Some systems store the graph in databases built for relationship traversal, such as Neo4j. That’s a good fit because software architecture is mostly a relationship problem.

The graph itself is usually metadata about the codebase, not code generation logic. Different layer. Different job.

For a concrete example, Pharaoh parses TypeScript and Python repos with Tree-sitter, stores structural relationships in Neo4j, and exposes queryable insights to AI tools through MCP. That gives agents a way to ask about codebase structure directly instead of reading half the repo first.

What Questions a Knowledge Graph Can Answer That File Search Cannot

This is where the value gets obvious. Most useful codebase questions are relationship questions, not text lookup questions.

A graph helps with codebase mapping:

- what modules exist

- how are they connected

- where are the hot spots

It helps with function search in a way grep can’t:

- does this logic already exist somewhere else

- where is the real implementation versus a re-export

- what calls it in production

It helps with blast radius:

- what breaks if we rename this function

- which modules depend on this file

- what endpoints or jobs are affected downstream

It helps with dead code detection:

- which exports are never called

- which modules are never imported by anything that actually runs
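Once exports and references are in the graph, the simplest form of dead code detection is a set difference. A minimal sketch, assuming hypothetical module names and a `find_dead_exports` helper that is not taken from any particular tool:

```python
def find_dead_exports(exports, references):
    """exports: {module: set of exported names}.
    references: set of (module, name) pairs used anywhere in the repo.
    Returns exported names that nothing references."""
    dead = []
    for module, names in exports.items():
        for name in names:
            if (module, name) not in references:
                dead.append((module, name))
    return sorted(dead)


# Hypothetical repo data.
exports = {
    "utils/money": {"formatCurrency", "parseCurrency"},
    "utils/dates": {"toIsoDate"},
}
references = {
    ("utils/money", "formatCurrency"),
    ("utils/dates", "toIsoDate"),
}

print(find_dead_exports(exports, references))
```

This only catches exports with no references at all. Code that is referenced but never reachable from an entry point needs the reachability check described next.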

It helps with reachability:

- is the code we just added actually connected to an endpoint, cron, CLI task, or worker

- did we build a feature or just write a dead branch
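A reachability check is a forward walk from known entry points. Here is a minimal sketch over a plain adjacency map; the entry point and function names are hypothetical.

```python
from collections import deque


def reachable_from(entry_points, calls):
    """Breadth-first walk over caller -> callees edges.
    Returns every node reachable from any entry point."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        current = queue.popleft()
        for callee in calls.get(current, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


# Hypothetical call graph: caller -> list of callees.
calls = {
    "POST /api/invoices": ["createInvoice"],
    "createInvoice": ["formatCurrency"],
    "exportInvoiceCsv": ["formatCurrency"],  # new code, never wired in
}

live = reachable_from(["POST /api/invoices"], calls)
print("exportInvoiceCsv" in live)
```

In this data `exportInvoiceCsv` calls real code but nothing calls it, so it never shows up in the reachable set. That is the "dead branch" case.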

And it helps with dependency tracing:

- are these modules tightly coupled

- do we have circular imports

- where does the coupling actually start
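Circular imports are a standard cycle-detection problem once import edges are in a graph. A minimal depth-first sketch, with hypothetical module names:

```python
def find_import_cycle(imports):
    """imports: {module: list of modules it imports}.
    Returns one cycle as a list of modules (first == last), or None."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on current path, finished
    color = {}
    stack = []

    def visit(module):
        color[module] = GRAY
        stack.append(module)
        for dep in imports.get(module, ()):
            state = color.get(dep, WHITE)
            if state == GRAY:
                # dep is already on the current path: cycle found.
                return stack[stack.index(dep):] + [dep]
            if state == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        stack.pop()
        color[module] = BLACK
        return None

    for module in imports:
        if color.get(module, WHITE) == WHITE:
            cycle = visit(module)
            if cycle:
                return cycle
    return None


imports = {
    "billing": ["notifications"],
    "notifications": ["users"],
    "users": ["billing"],
    "utils": [],
}
print(find_import_cycle(imports))
```

The returned path starts at the first module the walk found on the cycle, which gives a concrete place to begin untangling the coupling. Recursion depth would need attention on very deep import chains.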

Some teams also use graph-backed comparisons to spot spec or docs drift at a high level. Not magical understanding. Just a better way to ask whether important system pieces exist in code or only in plans.

A Concrete Example: From Blind Refactor to Informed Change

Take a shared notification formatter used by billing emails, in-app alerts, and a failed-payment cron job.

Before the graph, an agent edits formatNotificationPayload() in isolation. It updates the direct unit tests and maybe one obvious caller. Looks fine. Hidden problem: two indirect callers depend on a field the refactor removed.

That’s where regressions come from. Not from dramatic mistakes. From missing one quiet path.

After the graph, the workflow changes. Before editing, the agent checks dependency paths and reachability.

Example output might look like this:

    Function: formatNotificationPayload
    Risk: Medium-High
    Direct callers:
    - services/billing/sendInvoiceEmail.ts
    - services/alerts/createInAppAlert.ts
    Transitive impact:
    - POST /api/invoices/:id/pay
    - cron/failedPaymentRetry.ts
    Notes:
    - field "summary_text" consumed indirectly by createInAppAlert
    - change affects 4 downstream modules across 2 entry points

That’s enough context to make an intentional change. The agent can update related callers or at least flag the risk before touching the file.
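A report like the one above can come from a reverse walk over call edges. This is a minimal sketch, not how any specific tool computes it. The mapping of which caller leads to which entry point is an assumption made for illustration, and the `blast_radius` helper is hypothetical.

```python
from collections import deque


def blast_radius(target, callers):
    """callers: {callee: list of things that call it}.
    Returns direct callers and everything further upstream."""
    direct = sorted(callers.get(target, ()))
    seen = set(direct)
    queue = deque(direct)
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    transitive = sorted(seen - set(direct))
    return {"direct": direct, "transitive": transitive}


# Assumed wiring between the callers and entry points named in the report.
callers = {
    "formatNotificationPayload": [
        "services/billing/sendInvoiceEmail.ts",
        "services/alerts/createInAppAlert.ts",
    ],
    "services/billing/sendInvoiceEmail.ts": ["POST /api/invoices/:id/pay"],
    "services/alerts/createInAppAlert.ts": ["cron/failedPaymentRetry.ts"],
}

report = blast_radius("formatNotificationPayload", callers)
print(report["direct"])
print(report["transitive"])
```

The walk surfaces the two entry points that a direct-caller check alone would miss. Field-level notes such as the `summary_text` dependency need data-flow information that a plain call graph doesn't carry.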

Small detail, big difference.

Why Graph Queries Beat Token-Hungry Code Exploration

Without a structural layer, the agent often spends 40K tokens reading files just to guess how a subsystem fits together. A graph query can return the same architectural answer in roughly 2K tokens.

That gap matters every day.

You save on cost, but cost isn’t the main thing. The bigger win is reliability. If the answer comes from deterministic graph traversal, you don’t get a slightly different architecture story every session.

Some graph-backed systems also avoid LLM cost at query time after the repo is mapped. That’s a real operational difference. You map once, then ask many questions cheaply and repeatedly.

If your agent has to rediscover architecture from scratch in every session, your workflow is wasting both tokens and trust.

What a Knowledge Graph for Code Is Not

It helps to say this plainly, because skepticism here is healthy.

A knowledge graph for code is not:

- an IDE plugin by definition

- a coding assistant that writes code for you

- a code review bot by default

- a testing tool

It’s a structural intelligence layer. It makes other tools smarter.

That also means it’s different from adjacent categories. Code search platforms help you find files or snippets. Static analysis tools catch rule violations. Security scanners look for risk patterns. Quality frameworks help enforce standards and testing discipline.

Those all matter. They just solve different problems.

A graph helps you understand architecture and relationships. Linting, testing, and standards enforcement belong in a separate layer. If you’re tightening up the broader quality side, the AI Code Quality Framework is a useful reference.

Where Knowledge Graphs Help Most in Real Developer Workflows

The best use cases are not abstract. They show up in moments where mistakes are expensive and time is short.

Starting in an unfamiliar repo is the obvious one. You need module layout, dependency shape, and hot spots fast.

Before writing new code, function search matters more than people admit. Duplicate logic is one of the most common AI mistakes because “close enough” often looks original to a model.

Before a refactor, blast radius is the whole game. We’d rather know the risky edges up front than read about them in a bug report later.

After implementation, reachability checks are underrated. New code that isn’t connected to a real entry point is just a well-formatted dead end.

During PR review, structural risk often matters more than line-level style. A tiny diff can have ugly downstream effects.

During cleanup, graphs help find dead exports and repeated patterns across modules. When planning a monorepo or auditing several repos together, they’re useful for spotting overlapping logic or copy-pasted subsystems.

For a one-to-five-person team, that’s the point. You don’t have margin for avoidable architecture mistakes.

How MCP Changes the Way AI Agents Use Code Context

MCP is basically a standard way for AI tools to call external tools for context and actions. Practical, not mystical.

For code knowledge graphs, that changes the delivery model. The graph doesn’t live in a dashboard you check manually. It becomes available inside the coding session itself.

That’s the big shift.

In tools like Claude Code, Cursor, and Windsurf, an agent can query structure before making a change instead of reading random files until it feels confident. That’s a better workflow because the confidence is earned, not guessed.
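Under the hood, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. Here is a rough sketch of what such a request could look like. The tool name `blast_radius` and its `function` argument are hypothetical, not the actual tool names of any particular server.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and argument names, for illustration only.
request = build_tool_call(
    1, "blast_radius", {"function": "formatNotificationPayload"}
)
print(json.dumps(request, indent=2))
```

In practice the AI tool's MCP client builds and sends this for you. The point is that the agent asks one narrow structural question and gets a structured answer back, instead of opening files until it feels sure.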

Pharaoh is one implementation of this pattern. It exposes codebase intelligence through MCP so agents can ask structural questions directly while you work. If you already use an AI coding tool daily, adding this layer is less about changing your stack and more about fixing a missing input.

When a Knowledge Graph Is Worth the Complexity

Not every repo needs this on day one. A tiny throwaway script probably doesn’t.

It starts paying off when a few conditions show up together:

- the codebase is an active product, not a short-lived prototype

- your team uses AI agents daily

- module sprawl is growing

- refactors carry real regression risk

- people keep asking “what depends on this?”

A lower-priority case is a very small repo with shallow dependency depth and little reuse. In that world, the setup cost may not justify itself yet.

A simple test works well here. Count how often these happen in a month:

- duplicate logic appears

- AI-generated changes miss existing patterns

- someone asks whether a change is reachable in production

- a regression comes from misunderstood dependencies

If that count is non-trivial, the graph is probably worth it. The more interconnected the repo gets, the more useful it becomes.

How Pharaoh Applies This Idea in Practice

We built Pharaoh for this exact gap: AI agents are good at local edits and weak at repo-wide structure.

It converts repos into a queryable knowledge graph, parses TypeScript and Python with Tree-sitter, maps functions, modules, dependencies, endpoints, cron jobs, and environment variables, and exposes graph-backed tools through MCP.

The useful tools line up with the questions teams already ask:

- codebase mapping

- function search

- blast radius analysis

- dead code detection

- reachability checking

- dependency tracing

- cross-repo auditing

The practical differentiator is simple: after the initial mapping, those queries are deterministic graph lookups with no LLM cost per query. For solo founders and small AI teams, that means you can ask structural questions constantly without turning every session into a token burn.

If you already use Claude Code or Cursor, adding a codebase graph through MCP takes about two minutes. It doesn’t replace your editor, tests, or review habits. It gives your agent a better map.

Common Objections and the Right Way to Think About Them

The objections are reasonable.

“I can already grep the repo.”

Sure, but grep finds text. It does not tell you transitive impact or production reachability.

“My assistant already reads files.”

Reading files is not the same as understanding relationships across the system. Plenty of bad changes come from agents that read a lot and still miss the important path.

“This sounds like overkill.”

For tiny repos, maybe. For real products, mapping structure once is often cheaper than repeating blind edits all month.

“Isn’t this just another AI layer?”

Not if the answers come from parsed structure and deterministic traversal rather than fresh generation every time.

“Static analysis tools already exist.”

Yes, and they’re useful. But line-level rule checking and architectural graph traversal solve different problems. Don’t ask one tool category to do the other’s job.

Practical Adoption Checklist for an AI-Native Team

If you want to test this without turning it into a platform project, keep it narrow.

- Pick the repo where AI mistakes are most expensive.

- Write down the recurring questions grep answers poorly:

  - what depends on this

  - does this already exist

  - is this reachable

- Add a structural context layer before your next refactor or PR review.

- Test it in one workflow first:

  - refactoring sprint

  - PR review

  - new feature wiring check

- Pair structural intelligence with tests and quality checks. Don’t treat it as a replacement.

If you want a direct path, Pharaoh is one way to do this through MCP for Claude Code, Cursor, and similar tools.

The mindset shift is the real win: stop asking your agent to infer architecture from scratch every session.

A knowledge graph for code is a deterministic structural map. That’s why it helps. You get less duplicate code, safer refactors, better reachability checks, lower token waste, and more confidence when AI is touching real product code.

Better code decisions come from better structure, not better prompting alone.

Pick one active repo. Write down three architecture questions your agent struggles to answer today. Then test whether a graph-backed workflow can answer them before the next refactor or PR review.