Tree-Sitter Code Parsing Use Cases for AI Agents

Tree-sitter code parsing use cases sound obvious until your agent ships a neat refactor that duplicates an existing helper, misses two callers, and leaves new code hanging off nowhere. You don't need more file reads. You need structure.

What matters is whether your tooling can answer real repo questions before code gets written (or rewritten). Not parser theory. Actual decisions: where logic already lives, what breaks if you touch it, and whether a change is wired into anything that runs.

- Find existing functions before Cursor invents version #3

- Trace downstream callers before a "small" rename turns noisy

- Check reachability so shipped code is actually live

Read this and your agent stops guessing.

The Real Problem AI Agents Hit in Large Codebases

You’ve seen this one. Claude Code or Cursor starts a “small” refactor, misses the helper that already exists three folders over, writes a fresh version, then edits a shared function without noticing five downstream callers. The code looks plausible. That’s the dangerous part.

Most AI coding failures in real repos aren’t intelligence failures. They’re context failures. The model can write code. It just can’t reliably see how your codebase fits together before it writes anything.

That shows up in ways you already know:

- duplicate helper functions with slightly different behavior

- refactors that pass locally but break another path

- new code that never gets wired to an endpoint, cron, CLI, or event flow

- 40K tokens burned reading files one by one to answer a 2K question

- planning docs that assume architecture that doesn’t actually exist

The real question isn’t whether AI can generate code. It’s whether it can understand structure first.

That’s where tree-sitter code parsing use cases start to matter. Not as parser trivia. As infrastructure. Once code is parsed into structure, agents can stop wandering and start querying.

Better AI coding starts with better structure, not more generation.

What Tree-Sitter Actually Is and Why AI Agents Care

Tree-sitter is an incremental parsing system that turns source files into concrete syntax trees. In plain terms, it gives you a structured representation of code that tools can inspect reliably.

Three traits matter in agent workflows:

- it’s fast enough to run repeatedly as files change

- it can handle incomplete or broken code during editing

- it works across many languages through a fairly consistent interface

That second point matters more than people expect. Real coding sessions are messy. Half-written functions, syntax errors, renamed imports. If your parser falls over the moment a file is mid-edit, it’s not useful in an active Claude Code session.

There’s also a practical difference between a concrete syntax tree and an abstract syntax tree. An AST usually keeps only the meaningful structure and drops syntactic detail. Tree-sitter keeps source fidelity: exact byte and line/column positions, surrounding syntax, and node boundaries. That’s what lets tools map back to files, lines, declarations, and local context without guessing.
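To make source fidelity concrete, here’s a minimal sketch in Python. It uses the standard library’s `ast` module as a stand-in for tree-sitter so it runs with no extra packages. That also makes the error-tolerance point visible. `ast.parse` throws away the whole file over one half-written line, where tree-sitter would still return a tree and mark the broken region with an ERROR or MISSING node. The helper `list_declarations` and the sample code are hypothetical.

```python
from __future__ import annotations

import ast


def list_declarations(source: str) -> list[tuple[str, str, int, int]] | None:
    """Return (kind, name, start_line, end_line) for every def and class, or None on a syntax error.

    The stdlib `ast` module stands in for tree-sitter here. Unlike tree-sitter,
    `ast.parse` is not error tolerant: one half-written line and you get nothing.
    """
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return None
    decls = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            decls.append(("function", node.name, node.lineno, node.end_lineno))
        elif isinstance(node, ast.ClassDef):
            decls.append(("class", node.name, node.lineno, node.end_lineno))
    # Sort by start line so the output reads top to bottom like the file.
    return sorted(decls, key=lambda d: d[2])


complete = (
    "class Invoice:\n"
    "    def total(self):\n"
    "        return 0\n"
    "\n"
    "def format_date(d):\n"
    "    return d.isoformat()\n"
)
mid_edit = complete + "\ndef send_email(to\n"  # half-written signature, as in a live session

print(list_declarations(complete))
print(list_declarations(mid_edit))  # None
```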

Still, tree-sitter alone is not architectural intelligence. It’s the parsing layer. The value comes from what you build on top of it.

Code is not just text to retrieve. It has declarations, imports, exports, call paths, module boundaries, entry points. If your agent only sees strings, it misses the shape of the system.

Why Parsing Beats File-by-File Guessing for Agentic Development

There are two ways agents gather context.

One is the default: read files, summarize them, read more files, infer relationships, hope nothing important was skipped. It works on tiny repos. It gets expensive and unreliable fast.

The other is structure first: parse once, model relationships, query what matters.

File-by-file exploration breaks down for boring reasons:

- it’s token-heavy

- it’s inconsistent across sessions

- it misses transitive dependencies easily

- it can’t answer architecture questions from local file context alone

We’ve found the difference is often something like 2K tokens of architectural context instead of 40K tokens of blind file exploration. That’s not a small optimization. That changes whether an agent behaves like a teammate or a tourist.

Deterministic parsing also changes trust. The same repo produces the same structural view. The parsing layer doesn’t hallucinate. Query-time lookups can be deterministic instead of LLM-generated guesses.

For solo founders and small teams, that matters. You don’t have spare time for subtle breakage introduced by a tool that couldn’t see the blast radius.

The Main Tree-Sitter Code Parsing Use Cases for AI Agents

This is the part that matters. Not parser theory. The jobs parsing unlocks.

Codebase mapping

Before an agent changes anything, it should know what exists. Parsing can build a structural map of files, functions, modules, dependencies, endpoints, cron jobs, and env vars.

That’s especially useful at the start of a session in an unfamiliar repo. The fastest way to waste an hour is to let the agent “explore” by opening random files.
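A minimal sketch of a repo map, with Python’s `ast` standing in for tree-sitter and an in-memory dict standing in for the repo. It records functions, imported modules, and env vars read through `os.getenv`, `os.environ.get`, or `os.environ[...]`. Endpoints and cron jobs would need framework-specific rules on top. File paths and helper names are hypothetical, and the `Subscript` check assumes Python 3.9+.

```python
from __future__ import annotations

import ast


def env_var_name(node: ast.AST) -> str | None:
    """Return X for os.getenv("X"), os.environ.get("X") or os.environ["X"]; otherwise None."""
    if isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute) and node.args:
        first = node.args[0]
        if not (isinstance(first, ast.Constant) and isinstance(first.value, str)):
            return None
        func = node.func
        if func.attr == "getenv":
            return first.value
        if func.attr == "get" and isinstance(func.value, ast.Attribute) and func.value.attr == "environ":
            return first.value
    if (
        isinstance(node, ast.Subscript)
        and isinstance(node.value, ast.Attribute)
        and node.value.attr == "environ"
        and isinstance(node.slice, ast.Constant)  # Python 3.9+ layout
    ):
        return node.slice.value
    return None


def map_repo(files: dict[str, str]) -> dict[str, dict[str, list[str]]]:
    """Build a per-file structural map: functions, imported modules, and env vars read."""
    repo_map = {}
    for path, source in sorted(files.items()):
        functions, imports, env_vars = set(), set(), set()
        for node in ast.walk(ast.parse(source, filename=path)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                functions.add(node.name)
            elif isinstance(node, ast.Import):
                imports.update(alias.name for alias in node.names)
            elif isinstance(node, ast.ImportFrom) and node.module:
                imports.add(node.module)
            name = env_var_name(node)
            if name:
                env_vars.add(name)
        repo_map[path] = {
            "functions": sorted(functions),
            "imports": sorted(imports),
            "env_vars": sorted(env_vars),
        }
    return repo_map


repo = {
    "billing/invoice.py": (
        "import os\n"
        "from billing.tax import rate\n"
        "\n"
        "def total(items):\n"
        "    return sum(items) * rate()\n"
        "\n"
        "def currency():\n"
        "    return os.getenv('CURRENCY', 'USD')\n"
    ),
    "billing/tax.py": (
        "import os\n"
        "\n"
        "def rate():\n"
        "    return float(os.environ['TAX_RATE'])\n"
    ),
}

for path, summary in map_repo(repo).items():
    print(path, summary)
```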

Function and symbol discovery

Before writing a new utility, search for existing logic structurally. Not just with grep.

Parsed symbol discovery can resolve declarations, exports, and relationships. That’s how you avoid finding six functions named formatDate and missing the one actually used in production.
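Here’s the grep-versus-structure difference in a small sketch. `ast` stands in for tree-sitter, and `format_date` plays the role of `formatDate` in Python naming. Text search returns every line that mentions the name, comments and string literals included. The structural queries return only the definition and the real call site. All file names and helpers are hypothetical.

```python
from __future__ import annotations

import ast
import re


def grep_hits(files: dict[str, str], name: str) -> list[tuple[str, int]]:
    """Plain text search: every line that mentions the name, comments and strings included."""
    pattern = re.compile(rf"\b{re.escape(name)}\b")
    return [
        (path, lineno)
        for path, source in sorted(files.items())
        for lineno, line in enumerate(source.splitlines(), start=1)
        if pattern.search(line)
    ]


def find_definitions(files: dict[str, str], name: str) -> list[tuple[str, int]]:
    """Structural search: only places where a function with this name is defined."""
    hits = []
    for path, source in sorted(files.items()):
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and node.name == name:
                hits.append((path, node.lineno))
    return sorted(hits)


def find_call_sites(files: dict[str, str], name: str) -> list[tuple[str, int]]:
    """Structural search: only places where the name is actually called."""
    hits = []
    for path, source in sorted(files.items()):
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.Call):
                func = node.func
                called = func.id if isinstance(func, ast.Name) else getattr(func, "attr", None)
                if called == name:
                    hits.append((path, node.lineno))
    return sorted(hits)


files = {
    "utils/dates.py": "def format_date(d):\n    return d.strftime('%Y-%m-%d')\n",
    "reports/export.py": (
        "# TODO: replace with format_date once it handles timezones\n"
        "from utils.dates import format_date\n"
        "\n"
        "def export(row):\n"
        "    return format_date(row.created)\n"
    ),
    "legacy/helpers.py": "LABEL = 'format_date'  # used in an old log message\n",
}

print("grep:", grep_hits(files, "format_date"))
print("defined:", find_definitions(files, "format_date"))
print("called:", find_call_sites(files, "format_date"))
```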

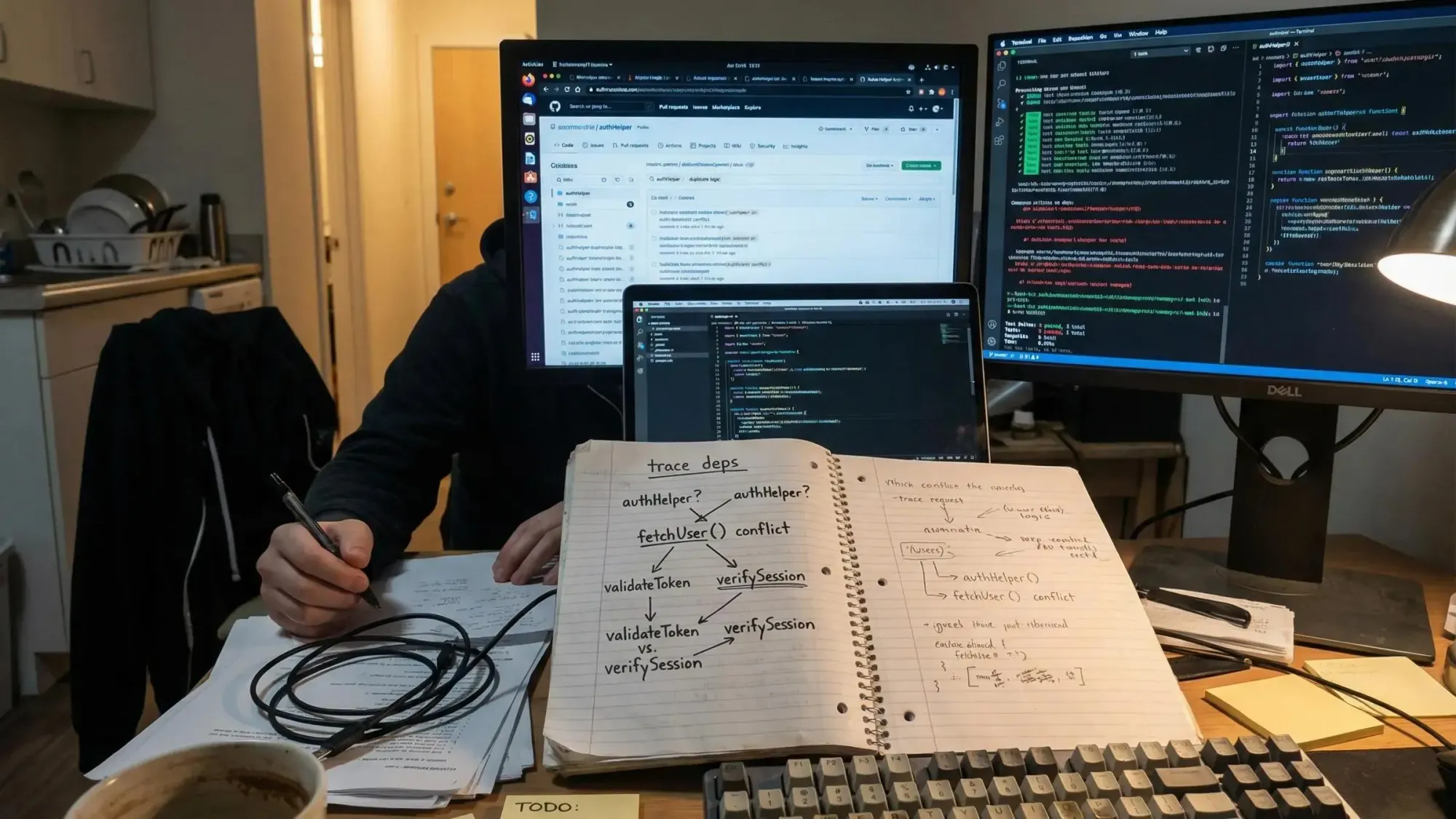

Dependency tracing and blast radius

These are related, but not the same. Dependency tracing asks how modules connect, including indirect paths. Blast radius asks what depends on this thing I’m about to touch.

You need both before:

- splitting modules

- extracting packages

- renaming shared utilities

- cleaning up circular dependencies

Direct caller search is usually too shallow. The nasty bugs live one layer past what was obvious.
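A minimal blast-radius sketch, assuming you already have a module import graph (module names are hypothetical). It inverts the edges and walks them breadth-first, so it reports indirect dependents and their distance instead of stopping at direct importers.

```python
from __future__ import annotations

from collections import deque


def blast_radius(imports: dict[str, set[str]], target: str) -> dict[str, int]:
    """Every module that depends on `target`, directly or transitively, mapped to its hop distance.

    `imports` maps each module to the modules it imports, so A -> B means "A depends on B".
    """
    # Invert the edges: for each module, who imports it?
    dependents: dict[str, set[str]] = {}
    for module, deps in imports.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    # Breadth-first walk outward from the target.
    distance = {target: 0}
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for parent in sorted(dependents.get(current, ())):
            if parent not in distance:  # also stops import cycles from looping forever
                distance[parent] = distance[current] + 1
                queue.append(parent)
    del distance[target]
    return distance


imports = {
    "api.orders": {"services.orders"},
    "api.admin": {"services.reports"},
    "jobs.nightly": {"services.reports"},
    "services.orders": {"utils.money"},
    "services.reports": {"services.orders"},
    "utils.money": set(),
}
radius = blast_radius(imports, "utils.money")
print("direct:", [m for m, d in radius.items() if d == 1])
print("total affected:", len(radius))
```

Here, the one direct importer of `utils.money` hides four more modules, including an admin endpoint and a nightly job, two and three hops away.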

Reachability and dead code

AI agents often write code that never gets invoked. It compiles. It may even test fine in isolation. It just isn’t connected to a real entry point.

Reachability checks ask whether a function is actually reachable from an endpoint, cron, CLI command, or event path. Dead code detection runs that check across the whole repo and flags everything no real entry point can reach.

That’s stronger than unused-symbol detection. A lot stronger. Unused-symbol checks only ask whether anything references a symbol, so a helper called only by other dead code still looks “used.”
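A sketch of why reachability beats unused-symbol detection, assuming a call graph where entry points (an endpoint and a cron job) are ordinary nodes. Every function name here is hypothetical. `legacy_transform` is referenced, so the weaker check treats it as used. But it’s only referenced by `sync_legacy`, which nothing real ever invokes, so both are dead.

```python
from __future__ import annotations

from collections import deque


def reachable_from(calls: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Every function reachable from any entry point by following caller -> callee edges."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        for callee in calls.get(queue.popleft(), ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def dead_code(calls: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Functions no entry point can reach, even if other (dead) code calls them."""
    everything = set(calls) | {c for callees in calls.values() for c in callees}
    return everything - reachable_from(calls, entry_points)


def unused_symbols(calls: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """The weaker check: functions that nothing references at all."""
    referenced = {c for callees in calls.values() for c in callees}
    return set(calls) - referenced - entry_points


calls = {
    "POST /orders": {"create_order"},           # HTTP endpoint
    "cron:nightly_cleanup": {"purge_expired"},  # scheduled job
    "create_order": {"validate", "save"},
    "purge_expired": {"save"},
    "validate": set(),
    "save": set(),
    "sync_legacy": {"legacy_transform"},        # written, never wired to anything
    "legacy_transform": {"save"},
}
entry_points = {"POST /orders", "cron:nightly_cleanup"}

print("dead:", sorted(dead_code(calls, entry_points)))
print("unused:", sorted(unused_symbols(calls, entry_points)))
```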

Duplication, vision gaps, and cross-repo auditing

Three more use cases show up once a repo gets a little messy:

- consolidation and duplication detection across modules solving the same problem

- vision gap analysis between specs or PRDs and what the code actually implements

- cross-repo auditing to compare overlap during migrations or consolidation work

These are not edge cases. By the second afternoon of AI-assisted shipping, most teams have some version of all three.
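For duplication, one cheap structural trick is to fingerprint each function after normalizing away its name, docstring, annotations, and local variable names, then group identical fingerprints. This is a sketch with `ast` standing in for tree-sitter, and it only catches copies that differ in naming. Logic rewritten in a different shape needs fuzzier matching. File and function names are hypothetical.

```python
from __future__ import annotations

import ast
import copy
import hashlib
from collections import defaultdict


class RenameLocals(ast.NodeTransformer):
    """Rename parameters and local variables to v0, v1, ... in order of first appearance."""

    def __init__(self, local_names: set[str]):
        self.local_names = local_names
        self.mapping: dict[str, str] = {}

    def alias(self, name: str) -> str:
        if name not in self.local_names:
            return name  # keep globals and builtins such as round() untouched
        return self.mapping.setdefault(name, f"v{len(self.mapping)}")

    def visit_arg(self, node: ast.arg) -> ast.arg:
        node.arg = self.alias(node.arg)
        node.annotation = None
        return node

    def visit_Name(self, node: ast.Name) -> ast.Name:
        node.id = self.alias(node.id)
        return node


def fingerprint(func: ast.FunctionDef) -> str:
    """Hash a function's structure, ignoring its name, docstring, annotations and local names."""
    func = copy.deepcopy(func)
    func.name, func.decorator_list, func.returns = "_", [], None
    first = func.body[0] if func.body else None
    if isinstance(first, ast.Expr) and isinstance(first.value, ast.Constant) and isinstance(first.value.value, str):
        func.body = func.body[1:]  # drop the docstring
    local_names = {a.arg for a in ast.walk(func) if isinstance(a, ast.arg)} | {
        n.id for n in ast.walk(func) if isinstance(n, ast.Name) and isinstance(n.ctx, ast.Store)
    }
    normalized = RenameLocals(local_names).visit(func)
    return hashlib.sha256(ast.dump(normalized).encode()).hexdigest()


def find_duplicates(files: dict[str, str]) -> list[list[str]]:
    """Group functions across files whose normalized structure is identical."""
    groups: dict[str, list[str]] = defaultdict(list)
    for path, source in sorted(files.items()):
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.FunctionDef):
                groups[fingerprint(node)].append(f"{path}:{node.name}")
    return sorted(sorted(names) for names in groups.values() if len(names) > 1)


files = {
    "billing/money.py": (
        "def cents_to_dollars(amount_cents):\n"
        '    """Convert integer cents to dollars."""\n'
        "    dollars = amount_cents / 100\n"
        "    return round(dollars, 2)\n"
    ),
    "reports/format.py": (
        "def to_dollars(c):\n"
        "    d = c / 100\n"
        "    return round(d, 2)\n"
        "\n"
        "def to_euros(c):\n"
        "    d = c / 100\n"
        "    return round(d * 0.92, 2)\n"
    ),
}
print(find_duplicates(files))
```

`to_euros` stays out of the group because its body really does differ.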

How These Use Cases Show Up in Real AI Workflows

The value is easier to see in actual workflow moments.

When you start in an unfamiliar repo, get a structural map first. Then inspect the relevant module. Then search for existing functionality before generating anything new. That order matters. If you skip it, the agent starts inventing.

When refactoring a shared utility, inspect downstream callers, assess blast radius, make the change, then verify reachability after. That sequence reduces the old anxiety around touching code nobody has looked at in months.

PR review is another one. Text diffs are not enough. You want to know what changed structurally:

- did this affect an endpoint or just an internal helper?

- did a new export get added but never wired into the app?

- did this rename hit a shared module with transitive consumers?
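A sketch of a structural PR check: compare a module’s public function signatures before and after the change instead of its lines. It uses `ast` as a stand-in parser and treats a leading underscore as private, which is a Python convention assumed here. The sample code is hypothetical.

```python
from __future__ import annotations

import ast


def public_api(source: str) -> dict[str, tuple[str, ...]]:
    """Map each top-level public function to its parameter names."""
    api = {}
    for node in ast.parse(source).body:  # top level only, not nested helpers
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and not node.name.startswith("_"):
            params = [*node.args.posonlyargs, *node.args.args, *node.args.kwonlyargs]
            api[node.name] = tuple(p.arg for p in params)
    return api


def structural_diff(before: str, after: str) -> dict[str, list[str]]:
    """Summarize what a change did to a module's public surface, not its lines."""
    old, new = public_api(before), public_api(after)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "signature_changed": sorted(n for n in old.keys() & new.keys() if old[n] != new[n]),
    }


before = (
    "def charge(customer, amount): ...\n"
    "def refund(charge_id): ...\n"
    "def _log(msg): ...\n"
)
after = (
    "def charge(customer, amount, currency): ...\n"
    "def refund_partial(charge_id, amount): ...\n"
    "def _log(msg, level): ...\n"
)
print(structural_diff(before, after))
```

A removed `refund` and a changed `charge` signature are exactly the cases where you then want the callers, not just the diff.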

Planning is where teams often miss the opportunity. Before writing a PRD or implementation plan, inspect current module boundaries and compare the proposed work against existing code. Small teams drift fast. Specs often assume something exists because it “probably does.”
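A sketch of a vision-gap check for one narrow case: HTTP routes. It assumes the spec can be reduced to `(METHOD, path)` pairs and that the app declares routes with decorators shaped like `@app.get("/users")`, as FastAPI and recent Flask versions do. The source is only parsed, never run, so neither framework needs to be installed. Everything named here is hypothetical.

```python
from __future__ import annotations

import ast

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def implemented_routes(source: str) -> set[tuple[str, str]]:
    """Collect (METHOD, path) pairs from decorators shaped like @app.get("/path")."""
    routes = set()
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        for dec in node.decorator_list:
            if (
                isinstance(dec, ast.Call)
                and isinstance(dec.func, ast.Attribute)
                and dec.func.attr in HTTP_METHODS
                and dec.args
                and isinstance(dec.args[0], ast.Constant)
            ):
                routes.add((dec.func.attr.upper(), dec.args[0].value))
    return routes


def vision_gap(spec: set[tuple[str, str]], source: str) -> dict[str, list[tuple[str, str]]]:
    """What the spec asks for that the code lacks, and what the code has that the spec never mentions."""
    built = implemented_routes(source)
    return {"missing": sorted(spec - built), "unplanned": sorted(built - spec)}


app_source = '''
@app.get("/users")
def list_users(): ...

@app.post("/users")
def create_user(): ...

@app.get("/debug/cache")
def dump_cache(): ...
'''
spec = {("GET", "/users"), ("POST", "/users"), ("DELETE", "/users/{user_id}")}
print(vision_gap(spec, app_source))
```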

And then there’s cleanup after AI-assisted shipping. That phase is real. Find duplicate logic. Identify dead code. Trace dependencies before deleting or consolidating. If you don’t, the repo slowly turns into a pile of near-matches.

Tree-Sitter Alone vs Tree-Sitter Plus a Knowledge Graph

This distinction matters.

Tree-sitter gives you syntax trees, exact source structure, and node-level data. Useful, but raw. It doesn’t automatically answer system questions like “what breaks if we change this?” or “is this path reachable from production?”

A graph layer adds the relationships that agents actually need:

- module-to-module links

- caller-callee chains

- entry-point tracing

- queryable architecture across the repo

That’s when questions become answerable in a way an agent can use:

- does this already exist?

- what breaks if I change this?

- is this code reachable?

- what from the spec is still missing?
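The graph layer starts with edges. A minimal sketch, with `ast` standing in for tree-sitter: extract caller-to-callee edges between functions defined in one file, then answer a chain query (“how does this handler end up calling that helper?”) with a breadth-first search. It only resolves plain-name calls within a single module. Methods, imports, and nested functions would need more resolution work. Names are hypothetical.

```python
from __future__ import annotations

import ast
from collections import defaultdict, deque

FUNCS = (ast.FunctionDef, ast.AsyncFunctionDef)


def call_edges(source: str) -> set[tuple[str, str]]:
    """Caller -> callee edges between functions defined in this source (plain-name calls only)."""
    tree = ast.parse(source)
    defined = {n.name for n in ast.walk(tree) if isinstance(n, FUNCS)}
    edges = set()
    for func in ast.walk(tree):
        if not isinstance(func, FUNCS):
            continue
        for node in ast.walk(func):
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name) and node.func.id in defined:
                edges.add((func.name, node.func.id))
    return edges


def call_chain(edges: set[tuple[str, str]], start: str, goal: str) -> list[str] | None:
    """Shortest caller -> callee path from start to goal, or None if goal is unreachable."""
    graph: dict[str, set[str]] = defaultdict(set)
    for caller, callee in edges:
        graph[caller].add(callee)
    previous = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            path = []
            node = current
            while node is not None:  # walk back through predecessors
                path.append(node)
                node = previous[node]
            return path[::-1]
        for nxt in sorted(graph[current]):
            if nxt not in previous:
                previous[nxt] = current
                queue.append(nxt)
    return None


checkout_source = '''
def handle_checkout(cart):
    order = build_order(cart)
    return charge(order)

def build_order(cart):
    return {"items": cart, "total": compute_total(cart)}

def compute_total(cart):
    return sum(apply_discount(item) for item in cart)

def apply_discount(item):
    return item * 0.9

def charge(order):
    return order["total"]
'''
edges = call_edges(checkout_source)
print(sorted(edges))
print(call_chain(edges, "handle_checkout", "apply_discount"))
```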

Pharaoh is one way to do this. We parse TypeScript and Python with tree-sitter, store structural relationships in Neo4j, and expose them through MCP so tools like Claude Code, Cursor, Windsurf, and GitHub apps can query architecture directly. The practical advantage is simple: deterministic graph lookups at query time, with zero LLM cost per query after initial repo mapping. If that’s relevant, Pharaoh does this automatically via MCP at pharaoh.so.

What Tree-Sitter Is Good At and What It Does Not Solve by Itself

It’s worth being precise here because a lot of tooling language gets fuzzy.

Tree-sitter is strong at:

- syntax-aware indexing

- exact source locations

- error-tolerant parsing during editing

- multi-language structural foundations

It does not by itself give you:

- certainty about runtime behavior

- test execution

- code review judgment

- automatic bug fixing

- full architectural intelligence without extra modeling

That matters because some tools talk as if parsing alone solves code understanding. It doesn’t. Parsing gives you disciplined raw material.

When you evaluate anything built on tree-sitter, ask sharper questions:

- what structure gets extracted?

- how are relationships modeled?

- are results deterministic?

- how current is the index as the repo changes?

- can agents actually use the output in their workflow?

If the answers are vague, the product probably is too.

How to Evaluate Tree-Sitter-Based Infrastructure for AI Agents

If you’re skeptical, good. You should be.

Start with practical questions:

- Which languages are supported well today?

- Does it extract functions, modules, imports, exports, endpoints, env vars, and call chains?

- Can it trace dependencies transitively, not just directly?

- Does it support cross-repo analysis?

- How does it stay current as the repo changes?

- Is it accessible through MCP or another agent-friendly interface?

- Are results deterministic, or is an LLM involved on every query?
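On staying current: a common approach is to hash each file and re-parse only what changed. Here’s a sketch at file granularity, with a trivial `ast`-based summary standing in for the real extraction. Tree-sitter can go finer than this, since it can reuse the previous syntax tree when re-parsing an edited file. Class and function names are hypothetical.

```python
from __future__ import annotations

import ast
import hashlib
from typing import Callable


def top_level_functions(source: str) -> list[str]:
    """Stand-in structural summary: names of top-level functions."""
    return sorted(n.name for n in ast.parse(source).body if isinstance(n, ast.FunctionDef))


class IncrementalIndex:
    """Keep a structural index current by re-parsing only files whose content changed."""

    def __init__(self, summarize: Callable[[str], list[str]]):
        self.summarize = summarize
        self.hashes: dict[str, str] = {}
        self.entries: dict[str, list[str]] = {}

    def refresh(self, files: dict[str, str]) -> list[str]:
        """Sync the index with `files` (path -> source); return the paths that were re-parsed."""
        reparsed = []
        for path in sorted(files):
            digest = hashlib.sha256(files[path].encode()).hexdigest()
            if self.hashes.get(path) != digest:
                self.entries[path] = self.summarize(files[path])
                self.hashes[path] = digest
                reparsed.append(path)
        for path in sorted(self.entries.keys() - files.keys()):  # files deleted since last refresh
            del self.entries[path]
            del self.hashes[path]
        return reparsed


index = IncrementalIndex(top_level_functions)
repo = {"a.py": "def f(): ...\n", "b.py": "def g(): ...\n"}
print(index.refresh(repo))  # first run parses everything
print(index.refresh(repo))  # nothing changed, so nothing is re-parsed
repo["b.py"] = "def g(): ...\ndef h(): ...\n"
del repo["a.py"]
print(index.refresh(repo), index.entries)
```

Because the output depends only on file contents, the same repo state always yields the same index, which is the determinism property worth asking vendors about.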

There are real tradeoffs across approaches. Text search is flexible but shallow. Embeddings help semantic recall but can miss architecture. Static analysis can be strict but doesn’t always fit day-to-day agent workflows. Graph-backed parsing tends to be better when your actual questions are about structure.

For small teams, the decision is usually simple. If you only need search, a graph might be overkill. If you need safe refactors, codebase-aware planning, and fewer blind edits, structure stops being optional.

Common Mistakes Teams Make When Adding Parsing to Agent Workflows

Most teams don’t fail because they picked parsing. They fail because they stop too early.

A few patterns show up over and over:

- treating parsing as a nice developer convenience instead of a control layer for AI changes

- assuming symbol search is enough without dependency tracing or reachability

- stopping at file-level indexing and never modeling module or call relationships

- using LLM summaries for architectural questions that should be answered deterministically

- forgetting planning workflows, where spec-to-code alignment matters before any code exists

- trapping the value in a dashboard instead of exposing it through MCP or another direct interface

One more mistake: mixing structural analysis with code quality and testing as if they’re the same thing. They aren’t. Structural context tells you what exists and how it connects. Linting, tests, and review checks tell you whether the change is correct. The open source AI Code Quality Framework covers that side at github.com/0xUXDesign/ai-code-quality-framework.

A Practical Architecture Pattern for AI-Native Teams

If you want a working model, keep it simple:

- Layer 1: parse code deterministically with tree-sitter

- Layer 2: normalize entities like functions, files, modules, imports, exports, endpoints, cron handlers, and env vars

- Layer 3: store relationships in a graph or similarly queryable structure

- Layer 4: expose those queries to agents through MCP

- Layer 5: pair structural context with coding, review, and planning workflows
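Layer 3 doesn’t have to start as a graph database. As a sketch, assuming call edges have already been extracted, SQLite (from Python’s standard library) can hold them, and a recursive CTE answers “who calls this, directly or transitively?” in one query. This isn’t how Pharaoh stores anything; Pharaoh uses Neo4j. Table and function names are hypothetical.

```python
from __future__ import annotations

import sqlite3


def build_store(edges: list[tuple[str, str]]) -> sqlite3.Connection:
    """Store caller -> callee edges in an in-memory SQLite table."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE calls (caller TEXT NOT NULL, callee TEXT NOT NULL, PRIMARY KEY (caller, callee))"
    )
    db.executemany("INSERT INTO calls (caller, callee) VALUES (?, ?)", edges)
    return db


def transitive_callers(db: sqlite3.Connection, function: str) -> list[str]:
    """Everything that calls `function`, directly or through other functions."""
    rows = db.execute(
        """
        WITH RECURSIVE upstream(name) AS (
            SELECT caller FROM calls WHERE callee = ?
            UNION  -- UNION (not UNION ALL) drops repeats, so cycles terminate
            SELECT calls.caller FROM calls JOIN upstream ON calls.callee = upstream.name
        )
        SELECT name FROM upstream ORDER BY name
        """,
        (function,),
    ).fetchall()
    return [name for (name,) in rows]


store = build_store([
    ("api_create_order", "create_order"),
    ("admin_tool", "create_order"),
    ("create_order", "save"),
    ("nightly_job", "purge_expired"),
    ("purge_expired", "save"),
])
print(transitive_callers(store, "save"))
```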

This works well for small teams because it reduces token waste and makes context repeatable. Same repo, same answers. That’s the part people underestimate.

A practical loop looks like this:

- before writing a utility, query whether it already exists

- before refactoring, query blast radius

- after implementation, check reachability

That’s not fancy. It’s just disciplined.

Where Pharaoh Fits if You Want This Without Building It Yourself

If you don’t want to build the stack yourself, Pharaoh is one way to operationalize these tree-sitter code parsing use cases for AI agents.

We parse TypeScript and Python repos, map them into a Neo4j knowledge graph, and expose structural queries through MCP for Claude Code, Cursor, and Windsurf. That supports codebase mapping, function search, blast radius, dead code detection, dependency tracing, reachability, vision gaps, and cross-repo auditing.

The part that tends to matter most to this audience is query behavior. After the initial repo mapping, graph lookups are deterministic and don’t incur LLM cost per question. That makes it useful for solo founders shipping quickly, small teams doing frequent refactors, and repos where duplicate code and unsafe edits are starting to pile up. More at pharaoh.so.

What You Can Do Today to Make Your AI Agent Less Blind

Don’t start with a platform decision. Start with one repo and one repeated pain.

- Pick an active repo and list the questions your agent keeps getting wrong:

  - what already exists?

  - what depends on this?

  - is this reachable?

  - what is duplicated?

- Check how your setup answers those today. Text search? Embeddings? Human memory? That usually reveals the problem fast.

- Add a parsing-backed layer to one workflow first:

  - refactoring

  - PR review

  - planning from a spec

- If you use Claude Code or another MCP-capable tool, add a codebase graph through MCP and compare it against blind file exploration.

- Pair structural analysis with code quality checks so architecture and correctness are both covered.

Tree-sitter code parsing use cases matter because agents need structure before they need more generation. The shift is practical: from file-by-file guessing to deterministic architectural context, and from duplicated, unreachable code to a habit of searching, tracing, and verifying.

Try one parsing-backed workflow in a real repo this week. Refactoring is usually the best test. If your agent asks fewer blind questions and makes fewer expensive mistakes, you’ll know the difference quickly.